Episode 25

Engineering resilient systems: Rescuing old treasures and unleashing modern capabilities

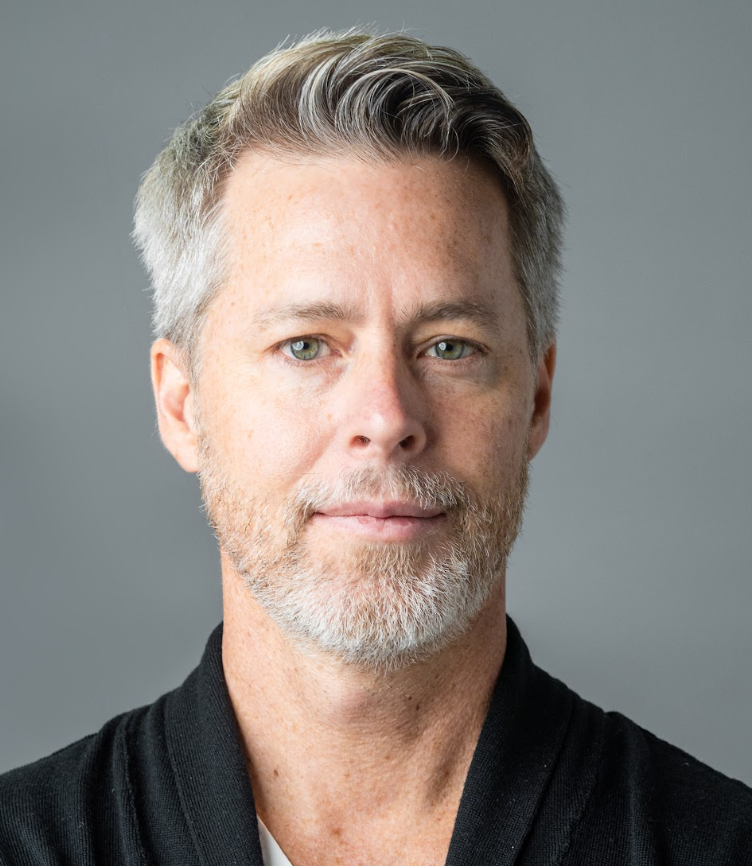

Marianne Bellotti

Author, Engineering Leader, Systems Geek

Are legacy systems just outdated systems? The answer is, it’s complicated…

In this episode, we’re joined by Marianne Bellotti, author of “Kill It With Fire”. Marianne has built data infrastructure for the United Nations and tackled some of the oldest and most complicated computer systems in the world as part of the United States Digital Service.

Join us as we discuss:

Marianne’s fascinating and often surprising insights from the field.

The art of balancing innovation with preserving the best of legacy systems.

Managing technology deployments, pushing back on load test results, and setting realistic expectations throughout a modernization project.

Why AI has the potential to change the world of software engineering.

A podcast for architects and engineers who are building modern, data-intensive applications and systems. In each weekly episode, an innovator joins host David Joy to share useful insights from their experiences building reliable, scalable, maintainable systems.

David Joy

Host, Big Ideas in App Architecture

Cockroach Labs

Latest episodes

Distributed Systems, Linkerd, and the Cost of Network Calls with William Morgan from Buoyant

William Morgan

CEO @ Buoyant, creators of Linkerd

Making Software as Durable as Data with Peter Kraft from DBOS

Peter Kraft

Co-founder of DBOS

Breaking the Pillars: Rethinking Observability with Charity Majors

Charity Majors

Co-founder and CTO of Honeycomb.io and co-author of Observability

How to Transform Dev Workflows with CI/CD and AI Agents with Tomer Karin

Tomer Karin

Embedded Software Architect

AI, Market Cycles, and the Systems Built to Outlast Them with Cockroach Labs CEO & Co-founder Spencer Kimball

Spencer Kimball

CEO & Co-founder at Cockroach Labs

How to Scale Data Infrastructure from Startup to Enterprise

Nishant Raman

Data Engineer at FinTech Company

How to Build an AI-Native Organization

Peter Mattis

Co-founder and CTO/CPO at Cockroach Labs

Inside Infrastructure as Code with Pulumi’s Founder & CEO

Joe Duffy

Founder/CEO at Pulumi

Inside Ericsson: How AI and Automation Are Shaping Telecom

Anand Bajaj

Chief Architect - 5G Network Slicing at Ericsson

Unboxing the Cloud: AI, Microservices, and Resilient Databases

Jim Hatcher

Solution Engineer at Cockroach Labs

Strategic AI and Cloud Solutions: GitHub’s Blueprint for Modern Development Success

Ari LiVigni

Senior Cloud Solutions Architect at GitHub

Cloud Architecture in the Public Sector: Balancing Innovation and Security

Nick Mayer

Principal Cloud Architect at Maximus

GenAI Meets Celebrity: Inside Cameo’s Journey from Startup to Stardom

Dom Scandinaro

CTO at Cameo

The journey from mainframe to adopting generative AI with Equifax’s Senior Network Architect

Samarth Shah

Senior Network Architect at Equifax

Modernizing your cloud strategy with OneStream’s Senior VP of Cloud Architecture

Ryan Berry

Senior VP Cloud Architecture at OneStream Software

Driving digital transformation with Chief Architect at Altimetrik, Ignacio Segovia

Ignacio Segovia

Chief Architect at Altimetrik

Discussing the Patterns of Distributed Systems with Unmesh Joshi

Unmesh Joshi

Principal Consultant at Thoughtworks and Author of Patterns of Distributed Systems

How to simplify your software architecture

Rob Reid

Technical Evangelist at Cockroach Labs

Behind the scenes with Vimeo’s Director of Enterprise Architecture

Sachin Joshi

Director of Enterprise Architecture at Vimeo

How to leverage real-time data processing for enterprises

Andrew Sellers

Head of Technology Strategy at Confluent

Inside the Mind of the Chief Architect at Index Exchange

Joshua Prismon

Chief Architect at Index Exchange

Solving for Scale: Real-time Retail Experiences with Endear's CTO

JP Grace

Endear

Data, Acquisitions, and AI: Insights from FiscalNote's CTO

Vlad Eidelman

CTO and Chief Scientist at FiscalNote

Discussing Data Trends in the AI Era

Gajanan Chinchwadkar

CTO at Hypermode

Unwrapping Moonpig: Architectural Insights into Personalization and Scalability

Alexis Lowe

Principal Engineer at Moonpig

Solving for data intelligence at scale

Madalina Tansie

Chief Technology Officer at Collibra

Simplifying solutions architecture with Brian Johnson of Booz Allen Hamilton

Brian Johnson

Sr. Solutions Architect at Booz Allen Hamilton

How to make your applications smarter

Rod Senra

VP of Engineering at Loadsmart

Scaling for 2 billion events per day with Principal Software Engineer at Red Ventures

Majid Fatemian

Principal Software Engineer, Data Platform at Red Ventures

The data behind digital marketing: A conversation with Bluecore’s Software Architect

Mike Hurwitz

Software Architect at Bluecore

A Lesson in Scaling: How Kami handled 25x growth with CTO and Co-Founder Jordan Thoms

Jordan Thoms

CTO & Co-Founder at Kami

Mastering Multi-Cloud with PwC’s Erol Kavas

Erol Kavas

Director at PwC Canada

From FedEx to Five Guys: Designing digital experiences with Yext’s VP of Software Engineering

Matt Bowman

VP of Software Engineering at Yext

Reliability and scalability in a data-driven world with Fivetran’s VP of Platform Engineering

Mike Gordon

VP of Platform Engineering at Fivetran

Enabling a data-driven and innovative engineering culture at Amplitude

Shadi Rostami

SVP of Engineering at Amplitude

How Estée Lauder scales strong engineering culture

Meg Adams

Executive Director of Platform Engineering at Estée Lauder

Can I take your order? Building conversational AI to improve the customer experience

Akshay Kayastha

Senior Engineering Manager at ConverseNow

Engineering resilient systems: Rescuing old treasures and unleashing modern capabilities

Marianne Bellotti

Author, Engineering Leader, Systems Geek

The Full Package: How Route architects its all-in-one post-purchase platform

Siddhartha Sandhu

Engineering Manager at Route

A historical journey in developer technologies

Mike Willbanks

CTO at Spark Labs

From Legacy to Cloud: Success stories from migrating mission-critical applications

Kishore Koduri

Senior Director of Enterprise Architecture at Ameren

Building purpose-driven engineering cultures

Jason Valentino

Head of Engineering Enablement at BNY Mellon

Modernizing Insurance Application Architecture at New York Life

Mike Murphy

Corporate Vice President and Life Insurance Domain Architect at New York Life

Innovation and Disruption: How Materialize pioneered a new era in data streaming

Arjun Narayan

Co-Founder and CEO at Materialize

Stories from an SRE: How Hans Knecht builds better developer experiences

Hans Knecht

Cloud Consultant at Knechtions Consulting (Ex: Capital One; Ex: Mission Lane)

Inside Chick-fil-A’s infrastructure recipe for a perfect customer experience

Brian Chambers

Chief Architect at Chick-fil-A Corporate

Modernizing from the Mainframe: An Exploration of Distributed Systems

Chris Stura

Director, PwC UK

IoT Standards & Data Mesh: Utility Facility App Architecture

Grant Muller

Vice President, Applications and Technology Architecture at Xylem

Relational Data Problems: Doubble Dating Application Architecture

Mattias Siø Fjellvang

CTO & Co-Founder at Doubble

From Legacy Systems to Limitless Scaling with Paycor’s Systems Engineering Fellow

Adam Koch

Systems Engineering Fellow at Paycor

How to Understand Problems & Build Better Software with Technical Leader Joe Lynch

Joe Lynch

Technical Leader

Observability in the Cloud & Dataflow Modifications with Yolanda Davis from Cloudera

Yolanda Davis

Principal Software Engineer, Data Flow Operations

Early Days at Google & Building CockroachDB with Peter Mattis

Peter Mattis

Co-Founder and CTO of Cockroach Labs

Database Benchmarking Efficiency with OtterTune’s Andy Pavlo

Andy Pavlo

Associate Professor of Databaseology at Carnegie Mellon and Co-Founder at OtterTune

Observability & Statelessness with TripleLift’s Chief Architect

Dan Goldin

Chief Architect at TripleLift

Understanding AI: PubNub CTO Stephen Blum’s Key to Faster App Development

Stephen Blum

PubNub

Building reliable systems with DoorDash's Matt Ranney

Matt Ranney

DoorDash

Real-Time Data Capturing: The Future of Fitness Technology

Paul Lawler

Head of Software at Wahoo Fitness

Building Efficient App Architecture with Alloy Automation’s Gregg Mojica

Gregg Mojica

Co-Founder and CTO at Alloy Automation

Unleashing the Power of Hiring Software with Greenhouse CTO Mike Boufford

Mike Boufford

CTO at Greenhouse Software

Decoding Data Warehousing: Insights from Ken Pickering, SVP of Engineering at Starburst Data

Ken Pickering

Senior Vice President of Engineering at Starburst Data