Before we define what a serverless database is, perhaps we should talk about what serverless means more broadly, and why there seems to be building momentum behind this general paradigm.

What is serverless computing and why is it growing?

Broadly, the term serverless refers to a development model in which an application or service is deployed to the cloud and cloud resources (compute, storage, etc.) are allocated to it automatically in near-real-time based on demand. It is “serverless” (from a developer perspective) because server management tasks such as scaling up or down have been abstracted away to be handled by the service provider.

That’s really just the tip of the iceberg, though. Whether it’s a serverless database, a serverless function, a serverless cloud data warehouse or even a custom backend to a CRM app, anything calling itself serverless should be built with the following principles in mind:

Little to no manual server management

Automatic, elastic app/service scale

Built-in resilience and inherent fault tolerance

Always available and instant access

Consumption-based rating or billing mechanism

So why is this model growing now? While the market dynamics are complex, there are two major factors that are driving us to serverless:

First, few developers actually enjoy managing their services and apps. Operations are a necessary evil, but serverless can promise a departure from these tasks or at least a method of abstracting them into the background.

Second, the economic model of the cloud is proving difficult for most everyone. Who wouldn’t want to reduce their bills from the public cloud providers down to exactly what they’ve consumed?

The emergence of the serverless computing paradigm seems to be a very natural progression, even an end state, for the move to automation and orchestration we’ve seen over the past few years. And when applied to the database, this paradigm can deliver massive value for all apps — whether serverless or not.

What is a serverless database?

Broadly, a serverless database is a managed database-as-a-service (DBaaS) offering that scales database resources up and down based on demand, and that bills users based on resource consumption.

But again, that’s just the tip of the iceberg. A serverless database should hit all of the “serverless computing paradigm” requirements discussed earlier in this article, so a more accurate definition might be: A serverless database is any database that embodies the core principles of the serverless computing paradigm:

Little to no manual server management

Automatic, elastic app/service scale

Built-in resilience and inherent fault tolerance

Always available and instant access

Consumption-based rating or billing mechanism

There are some additional, explicit principles that help define a serverless database. Data should be accurate and of high integrity, but — and of equal importance — data must also be available everywhere, and with very low latency. The very nature of serverless is inherently multi-regional: never tied to a single region and able to deliver all of this value anywhere. These four additional principles are:

Survive any failure domain, including regions

Geographic scale

Transactional guarantees

The elegance of relational SQL

When all of these elements come together we get a look at what the next generation database has become: A familiar database that’s delivered as a service, eliminates ops and reduces costs down to a count of the transactions and required storage used by your application. (Jump to this blog if you want to learn how we built a serverless sql database.)

Key serverless capabilities

Now that we’ve defined the core principles of serverless applications, let’s examine what each of these mean and how they are specifically realized in a serverless database.

1. Little to no manual server management

Serverless is quite the misnomer; it is actually a bunch of servers that are abstracted away and automated so you don’t have to manage them. The manual tasks of provisioning, capacity planning, scaling, maintenance, updates, etc, all still happen but are behind the scenes. Using them requires little to no manual intervention and very limited thought.

A serverless database eliminates the operational overhead of deployment, capacity planning, upgrading and management. It does all this without downtime and allows developers to focus on what matters – coding business logic.

2. Automatic, elastic scale

Within the serverless paradigm, elastic scale allows your service or app to consume the right amount of resources necessary for whatever your workload demands at any time. This elastic scale is automated and requires no changes to your app and will help optimize compute costs.

A serverless database allows for elastic scale for both storage and read/write transactional volumes. It will expand and contract based on workload all without interaction from a developer or ops personnel. It will scale up for a sudden spike in traffic and scale back down post-event to make sure you only pay for the compute you actually need/use.

3. Built-in resilience and inherently fault-tolerant

A serverless application will survive the failure of any backend compute instance, or other network or physical issue. This resilience allows for your service to be always available even as you upgrade.

A serverless database will survive backend failures and guarantee data correctness even when these issues happen. It can even allow you to implement online schema changes and roll software updates across multiple instances of the database, all without issue and while always remaining available. It eliminates both planned and unplanned downtime.

4. Always available and instant access

A key challenge with any serverless compute platform is not just ensuring a service is available but also that you can “wake it up” quickly whenever it has been dormant but then is all of sudden needed. Putting a non-used service to sleep allows you to save money on compute, but it should be there instantly whenever you need it again.

Your apps and services will rely on your serverless database to be always on and always available. More importantly, it should minimize any “waking up” time so that all customer requests are serviced in a timely manner.

5. Consumption-based rating or billing mechanism

A few of these core serverless capabilities help conserve compute. However, they are only as good as their built-in mechanism that tracks activity. A serverless app needs to be able to capture how much it consumes.

For a database, the two vectors of consumption are storage and transactional volume. A serverless database should capture both so that the app owner only has to pay for what they consume. Large apps may cost more than small but, no matter what, each can grow volume and the relative spend accordingly. It’s serverless, so you only pay for the resources you use, when you use them.

Defining a serverless database

The next three requirements are unique to a serverless database as the concepts of ubiquity and availability have a very profound effect on how to think about data and transactions — especially in serverless, and especially at global scale. If you agree that the serverless concept should divorce us from worrying about servers, then shouldn’t this also extend to worrying about where we run serverless?

For a serverless database the “where” presents significant problems as the speed of light encumbers the speed at which we can perform transactions, especially if we want any guarantee that data is correct. We can’t simply set up asynchronous replication to accomplish this as the serverless system is too fluid and too complex.

6. Survive any failure domain, including an entire region

Behind the scenes, a serverless platform will most likely be available across multiple regions. When you do not need to persist anything, this is relatively simple. However, if you want any sort of guarantees for data correctness and availability, it presents a significant challenge for the application. In order for a serverless database to survive the collapse of any failure domain (instance, rack, AZ, region, cloud provider, etc) it should persist multiple copies of data and then intelligently control where the data resides to avoid these failures. The placement of data should be able to be controlled, but by default automated to maximize availability.

7. Geographic scale

Within the context of a database, scale is typically thought of as the total storage volume needed, but this also extends to the necessary transactional scale. For a serverless database, scale can also be extended to geography as data is needed everywhere.

The database should automate geo-partitioning, moving data dynamically around the globe to minimize data access latency concerns. And, in more mature serverless databases, it will be able to automate the placement of data cross regions in order to help solve regional data domiciling requirements (such as GDPR).

8. Transactional guarantees

If we want transactions within a serverless database, we need to place a lot of attention on their performance across board geographies. After all, if you have a user hitting your service in Asia and another in Europe and they are both trying to access the same record, who wins? Transactional guarantees and data integrity are less complex in a single region, but we aren’t talking about a single region when we speak of the ultimate definition of serverless. Serverless knows no bounds.

9. The beauty and elegance of a relational database (SQL)

Finally, we have been talking about a database throughout all of these requirements thus far. There are many types, but the most complex to deliver is a relational database that delivers referential integrity, joins, secondary indexes, etc.

Delivering the elegance of SQL may not be a requirement of every serverless database, but it should be considered an adjunct requirement because of its importance to most of our operational workloads.

All things distributed

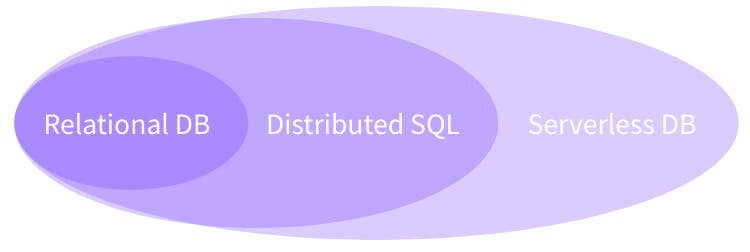

A seemingly long time ago, we wrote a post that helped define the Distributed SQL concept. Serverless is simply an extension of these same principles delivered in a way that completely eliminates operations, makes adoption easy and minimizes costs. One builds on another.

We raise this issue as the requirements above lay out a plan and guideline for what serverless database should mean and ultimately it is based on the same principles of distributed systems. Without one, you cannot have the other.

There is also a growing trend among software vendors to shift to this new approach, and many are architecting their systems to become serverless. This pattern has already been established by a few leading edge companies.

If you can tease away storage from compute, you can create a serverless environment. In an architecture where compute and storage are separate, you have compute or services that scale independently of how things are stored. They can be cycled up and down or may wait in a “hot” state, waiting for a resident. We believe this baseline architecture for serverless is right as it provides the inherent scalability and consumption-based capabilities that in part define serverless principles.

The future is familiar

Ultimately, we feel the concept of a serverless database will eventually become an implementation detail and that developers, once familiar and comfortable with this approach, won’t care. We believe the database will ultimately become a SQL API in the cloud with endpoints all over the planet, a service that no developer will need to think about. Instead, they can simply just code against it. It should be as simple as building authentication into an app with Okta. It should be like adding messaging to your app with the Twilio API.

Developers know what they want and don’t want. And we are pretty sure they don’t want ops. The world is becoming serverless…Including the database.

Additional serverless resources

Free online course: Introduction to Serverless Databases and CockroachDB Serverless. This course introduces the core concepts behind serverless databases and gives you the tools you need to get started with CockroachDB Serverless

Bring us your feedback: Join our Slack community and let us know your thoughts!

Serverless Function best practices from our documentation

Learn when to use a serverless database (and when not to).

A case study of a gaming company building on a serverless database