When Becca Taft first sat in on one of Michael Stonebraker's research group meetings at MIT, she wasn't planning to work on databases.

Her career had already taken some turns, from studying physics at Yale to working in IT consulting and later as a software engineer at Bloomberg. Then, in graduate school, her direction shifted decisively when she began attending group meetings led by Stonebraker, a pioneer of relational database research.

"My path to databases was definitely nonlinear,” Taft says. “It was at Bloomberg that I first encountered the challenges of working with data at scale. That experience led me to pursue a PhD at MIT, where I initially planned to study computational biology. After reaching out to Mike Stonebraker and sitting in on some of his research group meetings, however, I realized distributed databases were what I actually wanted to work on."

That decision didn't just change her research focus: It set her career path in a fascinating new direction. Today, as Director, Engineering at Cockroach Labs, Taft leads teams responsible for critical components of CockroachDB's SQL layer. Her work spans query optimization, indexing, and the systems that sit closest to how developers actually interact with data. Across it all, her focus is consistent: building systems that don't just work in theory, but hold up under real-world conditions.

This reflects a broader shift in database engineering: success is defined not by theoretical performance, but by how systems behave under real-world workloads at scale. For businesses, that translates to uptime, customer experience, and the ability to grow without re-architecture.

From academic models to production-ready systems

Taft's doctoral research focused on database elasticity: how distributed systems can dynamically scale resources in response to changing workloads. The biggest lesson she took from that work wasn't tied to a specific subsystem; it was how to bridge database theory and real-world applications.

"That's actually one of the biggest gaps between academic theory and production reality,” she observes. “With an academic prototype, you don't need to think too much about handling edge cases or ensuring that existing customers don't see any regressions. In production, all of that is critical. You can't just prove something works in the common case; you have to make sure it works for every customer workload without breaking anything."

"More broadly though, the PhD taught me how to approach difficult and ill-defined problems, which is something I use constantly."

For Taft, that gap – between proving something works and ensuring it always works – defines the challenge of distributed systems. In practice, it is also where many distributed systems fail: scaling a database isn't just a matter of adding nodes, but of ensuring consistent performance, predictable latency, and correctness across workloads. Closing that gap is what turns a working system into a production-ready platform.

Building a cost-based query optimizer without breaking existing workloads

One of Taft's earliest and most significant contributions at Cockroach Labs was helping build the database's cost-based query optimizer from the ground up.

"I was part of a small team of four: a manager/tech lead, a senior IC, myself – at that time still a relatively junior IC – and one other junior IC,” she recalls. “Before we really started building, we spent time learning about query optimization by reading academic papers with guidance from an optimizer expert we had hired as a contractor, and playing around with an early prototype called ‘opttoy.’ Once we started working as a team in earnest, we shipped the first version in less than a year."

A fast-growing user base made the work more complex than a typical greenfield project. "One of the key challenges," says Taft, "was that CockroachDB already had customers, so we needed to make sure all their queries still worked and didn't see significant regressions. We also fell back to the existing heuristic planner in some cases initially."

"There were some interesting design questions too,” she continues, “like whether to build a domain-specific language (DSL) for the optimizer. Andy Kimball, who led the project, pushed for a DSL, which in hindsight has been a great decision. It makes adding each new transformation rule much easier."

Today, CockroachDB's optimizer includes hundreds of transformation rules, and the way it was built reflects a repeatable approach to solving complex problems.
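To make the idea of declarative transformation rules concrete, here is a minimal Python sketch. It is not CockroachDB's DSL and does not reproduce its actual rules. The expression encoding, the rule names (`EliminateDoubleNot`, `SimplifyAndTrue`, `FoldAndFalse`), and the `normalize` engine are hypothetical. They are chosen only to illustrate why separating rule definitions from a generic rewrite engine makes each new rule cheap to add.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Union

# Hypothetical expression encoding: a column name (str), a boolean constant,
# or a tuple such as ("not", x) or ("and", x, y).
Expr = Union[str, bool, tuple]


@dataclass(frozen=True)
class Rule:
    name: str
    # Returns the rewritten expression, or None if the rule does not match.
    apply: Callable[[Expr], Optional[Expr]]


def eliminate_double_not(e: Expr) -> Optional[Expr]:
    # NOT (NOT x)  =>  x
    if isinstance(e, tuple) and e[0] == "not":
        inner = e[1]
        if isinstance(inner, tuple) and inner[0] == "not":
            return inner[1]
    return None


def simplify_and_true(e: Expr) -> Optional[Expr]:
    # x AND TRUE  =>  x   (and symmetrically TRUE AND x  =>  x)
    if isinstance(e, tuple) and e[0] == "and":
        _, left, right = e
        if right is True:
            return left
        if left is True:
            return right
    return None


def fold_and_false(e: Expr) -> Optional[Expr]:
    # x AND FALSE  =>  FALSE
    if isinstance(e, tuple) and e[0] == "and" and any(c is False for c in e[1:]):
        return False
    return None


# Adding a rule means writing one small function and appending it here;
# the engine below never changes.
RULES: List[Rule] = [
    Rule("EliminateDoubleNot", eliminate_double_not),
    Rule("SimplifyAndTrue", simplify_and_true),
    Rule("FoldAndFalse", fold_and_false),
]


def normalize(e: Expr) -> Expr:
    """Rewrite children first, then apply rules at this node until none match."""
    if isinstance(e, tuple):
        e = (e[0],) + tuple(normalize(c) for c in e[1:])
    for rule in RULES:
        rewritten = rule.apply(e)
        if rewritten is not None:
            return normalize(rewritten)
    return e


print(normalize(("not", ("not", ("and", "a", True)))))  # a
print(normalize(("and", "b", ("not", ("not", False)))))  # False
```

This sketch covers only normalization-style rewrites. A cost-based optimizer also explores alternative plans and chooses among them by estimated cost, which is out of scope here.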

"I think our approach to building the optimizer is a great example of how I like to approach hard technical problems more generally,” Taft says. “Start by understanding the theory through reading papers and learning from experts, then prototype and design, and then convert the prototype into production-ready code."

What real-world systems reveal about database performance at scale

If building systems exposes the gap between theory and reality, working directly with customers makes that gap impossible to ignore. Their real-world usage patterns are where database performance issues surface most clearly, often in ways that directly impact application latency, reliability, and ultimately revenue.

"In the early days of CockroachDB, before we had a full technical support team, I was one of the engineers handling customer problems directly,” notes Taft. “Today, our technical support engineers handle the first line of support, but when they determine that an issue needs deeper expertise from the SQL Queries team, it comes to us. That's usually either a tricky performance issue or a bug in the product."

"The main lesson is that customers will always use features in ways you don't expect, and systems behave differently than you expect at massive scale."

Leading teams that ship – and sustain – complex systems

In her role as an engineering director, Taft now oversees multiple teams across geographies and areas of responsibility, but her focus remains grounded in outcomes.

"My days are pretty varied," she says. "At least half my time goes to meetings: 1-1s with my reports, team and project meetings, leadership meetings. Beyond that, I work with PM to plan our roadmap, read and review design docs and product briefs, write blog posts [such as a recent post on CockroachDB patents], plan quality initiatives like bug bashes, and do a lot of cross-team communication and coordination."

Managing both direct and indirect teams across regions, she constantly balances delivery with sustainability. "Success ultimately comes down to shipping features that our customers value and ensuring those customers are successful,” states Taft. “That means aiming for high quality so things work out of the box, but also quickly unblocking customers when they run into issues."

"Achieving that requires working closely with product management (PM) to define a roadmap that balances forward-looking, differentiating features; specific things customers have requested; quality work like testing; and company-wide goals like performance improvements and reducing total cost of ownership."

In the constant juggling of test triage, customer escalations, and roadmap progress, Taft makes team health a top priority. "Engineers do their best work when they feel motivated, have ownership, and are given opportunities to grow," she says. "I try to build trust by giving the team a voice, not just in building the product but in determining what we should build and how we run the team."

Why AI workloads force a rethink in distributed systems

As AI workloads reshape application architectures, databases are evolving to support vector search and new access patterns. In distributed systems, that shift introduces deeper challenges.

"The hard part is building a high-performance, scalable vector index that works in a distributed system,” explains Taft. “Most vector indexing algorithms were designed for single-node databases where you can keep large data structures in memory and every data access is local."

"In a distributed database like CockroachDB, each hop through an index can mean a network round-trip to a different node, which makes graph-based approaches like hierarchical navigable small world (HNSW) extremely expensive. You also can't assume shared in-memory state across nodes, especially in a serverless environment where pods are spinning up and down."

The solution was to develop C-SPANN, an approach that adapts Microsoft's SPANN algorithm to work with CockroachDB's distributed, range-partitioned architecture. “Instead of a graph, it organizes vectors into partitions via K-means clustering that map naturally to our KV layer, so they can be split, merged, and distributed just like the rest of our data,” Taft says. “The number of network hops is small and predictable, and partitions at each level can be searched in parallel."
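The partition-based idea can be illustrated with a simplified, single-level sketch in plain Python (3.8+ for `math.dist`). This is not C-SPANN or SPANN. It is a basic inverted-file style index, and its helper names (`kmeans`, `build_partitions`, `search`) and the `nprobe` parameter (how many partitions to scan) are hypothetical. It shows the property Taft describes: a query first compares itself against a small set of centroids, then scans a fixed number of partitions. The number of partition reads per query is therefore bounded by `nprobe` rather than depending on a graph walk. The idea that each partition could map to its own key range in a distributed store is an assumption, and this sketch does not model it.

```python
import math
import random
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def kmeans(vectors: List[Vector], k: int, iters: int = 10, seed: int = 0) -> List[List[float]]:
    """Plain Lloyd's k-means: returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, k)]
    for _ in range(iters):
        clusters: Dict[int, List[Vector]] = {i: [] for i in range(k)}
        for v in vectors:
            nearest = min(range(k), key=lambda i: math.dist(v, centroids[i]))
            clusters[nearest].append(v)
        for i, members in clusters.items():
            if members:  # keep the previous centroid if a cluster ends up empty
                centroids[i] = [sum(xs) / len(members) for xs in zip(*members)]
    return centroids


def build_partitions(vectors: List[Vector],
                     centroids: List[List[float]]) -> Dict[int, List[Tuple[int, Vector]]]:
    """Assign each (id, vector) pair to the partition of its nearest centroid."""
    parts: Dict[int, List[Tuple[int, Vector]]] = {i: [] for i in range(len(centroids))}
    for vid, v in enumerate(vectors):
        nearest = min(range(len(centroids)), key=lambda i: math.dist(v, centroids[i]))
        parts[nearest].append((vid, v))
    return parts


def search(query: Vector, centroids: List[List[float]],
           parts: Dict[int, List[Tuple[int, Vector]]], k: int = 3, nprobe: int = 2) -> List[int]:
    # 1. Choose the nprobe partitions whose centroids are closest to the query.
    probe = sorted(range(len(centroids)), key=lambda i: math.dist(query, centroids[i]))[:nprobe]
    # 2. Scan only those partitions; the scans are independent, so they could run in parallel.
    candidates = [(math.dist(query, v), vid) for p in probe for vid, v in parts[p]]
    # 3. Return the ids of the k nearest candidates.
    return [vid for _, vid in sorted(candidates)[:k]]


vectors = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
centroids = kmeans(vectors, k=2)
parts = build_partitions(vectors, centroids)
print(search((0.1, 0.3), centroids, parts, k=3, nprobe=1))  # [0, 1, 2]
print(search((10, 10.9), centroids, parts, k=2, nprobe=1))  # [4, 3]
```

With `nprobe=1`, each query reads exactly one partition. Raising `nprobe` trades more reads for better recall, since a true neighbor can sit just across a partition boundary. C-SPANN, as described above, is multi-level and maps partitions onto CockroachDB's KV layer, which this sketch does not attempt.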

This highlights a key shift in database architecture: Supporting AI workloads requires rethinking how data is indexed and retrieved in distributed systems. A distributed vector index has to balance accuracy, latency, and network cost while still operating within transactional boundaries.

"That's why you can't just bolt on vector support and call it done,” Taft continues. “A single-node database can drop in an off-the-shelf index like HNSW and get reasonable performance. But in a distributed system, you have to rethink the algorithm from the ground up to account for network latency, lack of shared memory, and the need for transactional consistency. Vectors need to be queryable alongside the rest of your data in a single transaction, not siloed in a separate system with different consistency guarantees."

Why speed and correctness aren’t tradeoffs in distributed databases

As CockroachDB expands into AI-driven workflows and agent-based systems, Taft sees continuity rather than tradeoffs.

"I don't actually think it's an either/or,” she says. “Agents require many of the same capabilities our customers are already using: strong consistency, high availability, multi-region deployments, and the ability to scale without re-architecting. CockroachDB is well suited to agent workloads precisely because of the resilience and correctness guarantees we've already built."

"What's new is how agents interact with the database. Agents are becoming first-class users alongside humans, and they need things like structured interfaces with deterministic outputs, scoped permissions so they can't exceed their intended access, and full auditability so teams can track what an agent did and why."

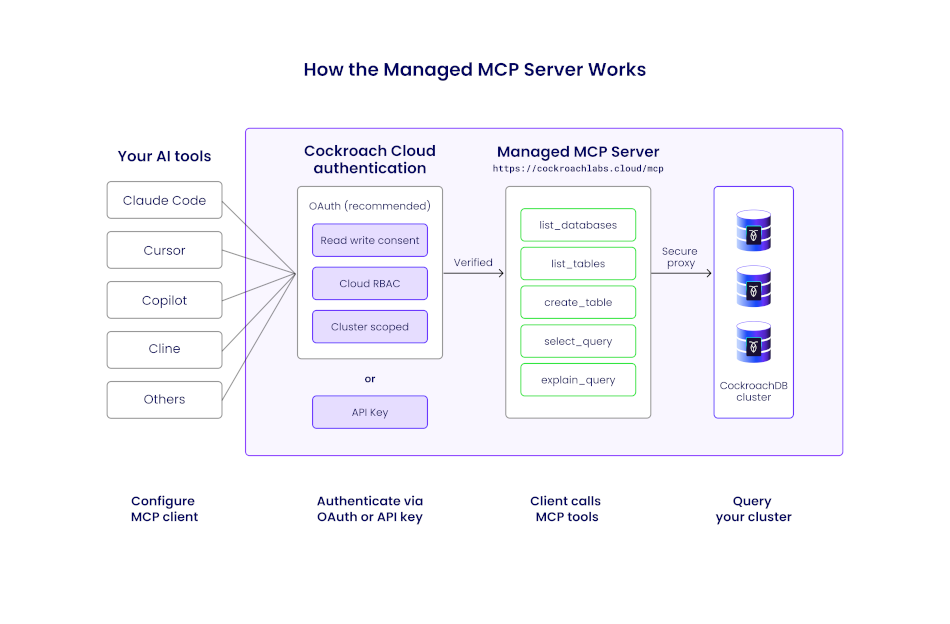

Taft points, for example, to the Managed MCP (Model Context Protocol) Server, which gives agents a secure, cloud-hosted way to connect to CockroachDB with those guardrails built in. It is read-only by default, uses service account authentication with Cloud role-based access control (RBAC), and blocks destructive operations even when writes are enabled.

The diagram above shows the Managed MCP request flow: how an AI agent interacts with CockroachDB Cloud through the managed MCP endpoint without introducing a new data-plane access path.

"We're also using agents internally to increase our own speed of development, which is helping us ship AI-adjacent features faster without cutting corners on quality,” she adds. “Ultimately, the properties that make CockroachDB reliable for traditional workloads are the same ones agents need to operate safely at scale, so we don't see a tension between moving fast on AI and maintaining our correctness guarantees."

Building systems – and a career – that last

Taft has been at Cockroach Labs for over eight years, growing alongside the company as both an engineer and a leader.

"When I was exploring opportunities after my PhD, Cockroach Labs stood out for a few reasons,” says Taft. “The technology was interesting and closely related to my research, which made it a natural fit. But what really sold me was the culture. It was clear the founders had made that a priority from day one, with strong values around transparency, respect, and balance. The engineers I met were both deeply technical and genuinely kind."

"What's kept me here is that the company has continued to invest in my growth. I started as an individual contributor on the SQL Optimizer team, became a tech lead, then moved into engineering management, and now I'm a director. The company has also supported my continued involvement in the academic research community, which is something I value. Over eight years later, I'm still learning and still excited about the work."

Despite everything she accomplishes at Cockroach Labs, yes, Becca Taft does have a life outside engineering. In her off-hours, she moves to a different rhythm.

"Outside of work, I'm part of a rowing team and I have a two-year-old son, so life is pretty full!” she emphasizes. “One of the reasons I've stayed at Cockroach Labs as long as I have is that the company genuinely supports balance. Generous PTO, strong parental leave, flexibility around how and where you work. Those things were important to me when I joined, and the company has consistently delivered on them."

Her story is a reminder that systems that hold up in the real world depend on more than resilient data architecture: They also depend on sustaining the people behind them.

See How CockroachDB Handles Production at Scale: Explore CockroachDB's architecture and capabilities with a free trial, or contact our team to discuss how distributed SQL supports your most demanding workloads.

David Weiss is Senior Technical Content Marketer for Cockroach Labs. In addition to data, his deep content portfolio includes cloud, SaaS, cybersecurity, and crypto/blockchain.