[For CockroachDB's most up-to-date performance benchmarks, please read our Performance Overview page]

Correctness, stability, and performance are the foundations of CockroachDB. Today, we will demonstrate our rapid progress in performance and scalability with CockroachDB 2.1.

CockroachDB is now 50x more scalable than Amazon Aurora at less than 2% of the price per tpmC. And unlike Aurora and other databases that selectively degrade isolation levels for performance, CockroachDB can achieve massive scale while maintaining serializable isolation, protecting your data from fraud and data loss. Read on to see benchmarked metrics that demonstrate that CockroachDB can provide customers an ultra-resilient and highly available database at massive scale.

TPC-C: Benchmarking for OLTP Databases

Cockroach Labs measures performance with many diverse tests, including TPC-C, the industry-standard OLTP benchmark, which simulates an e-commerce or retail company. In April we introduced our readers to TPC-C and published our first TPC-C results, measuring throughput in transactions per minute (tpmC). We then published TPC-C results at scale. Finally, because scale matters only if it comes at a reasonable cost, we published a follow-on cost comparison showing that CockroachDB not only scales better than Amazon Aurora but also does so at a lower price. In this post we revisit all three dimensions (throughput, scale, and cost) and show that CockroachDB 2.1 is now 50x more scalable than Amazon Aurora.

Updated Metrics

Benchmarking Scale and Transactional Throughput with TPC-C

CockroachDB 2.1 reaches an incredible 631K tpmC at TPC-C 50K (50,000 warehouses)! We suspect we could push CockroachDB 2.1 even further, since these results came in at 98% of the maximum possible efficiency for TPC-C 50K.
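The efficiency figure is simply measured tpmC divided by the theoretical ceiling for the warehouse count. The sketch below assumes the commonly cited TPC-C ceiling of 12.86 tpmC per warehouse (a consequence of the spec's keying and think times) and uses the rounded 631K figure from above; the constant and function names are illustrative, not taken from any CockroachDB tool.

```python
# Assumption: 12.86 tpmC per warehouse is the commonly cited TPC-C ceiling,
# imposed by the spec's keying and think times for each terminal.
MAX_TPMC_PER_WAREHOUSE = 12.86


def max_tpmc(warehouses: int) -> float:
    """Theoretical tpmC ceiling for a TPC-C run with this many warehouses."""
    return MAX_TPMC_PER_WAREHOUSE * warehouses


def efficiency(tpmc: float, warehouses: int) -> float:
    """Measured tpmC as a fraction of the theoretical ceiling."""
    return tpmc / max_tpmc(warehouses)


if __name__ == "__main__":
    # 631,000 is the rounded "631K tpmC" result at TPC-C 50K.
    print(f"ceiling: {max_tpmc(50_000):,.0f} tpmC")        # ceiling: 643,000 tpmC
    print(f"efficiency: {efficiency(631_000, 50_000):.1%}")  # efficiency: 98.1%
```

Because the ceiling grows only with warehouses, a run near 100% efficiency suggests the cluster is keeping up with the offered load rather than hitting its own limit.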

We compared our unofficial TPC-C results to Amazon Aurora's unofficial TPC-C results from AWS re:Invent 2017. We also used Aurora's SIGMOD 2017 paper for additional details about their test setup and load generator.

Based on Aurora's most recently published metrics, CockroachDB 2.1 is now 50 times more scalable than Amazon Aurora (a 5x increase over CockroachDB 2.0), supporting 25 billion rows and more than 4 terabytes of frequently accessed data.
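The 25 billion rows figure follows from the initial table sizes TPC-C prescribes per warehouse. The sketch below tallies those standard initial cardinalities (order lines are an average, since each initial order has 5 to 15 lines); it counts only the initial population, not rows added while the benchmark runs, and the dictionary and function names are illustrative.

```python
# Initial TPC-C table cardinalities per warehouse.
ROWS_PER_WAREHOUSE = {
    "warehouse": 1,
    "district": 10,
    "customer": 30_000,     # 3,000 per district
    "history": 30_000,      # one per customer
    "orders": 30_000,       # 3,000 per district
    "new_order": 9_000,     # 900 undelivered orders per district
    "order_line": 300_000,  # about 10 per order on average (5 to 15)
    "stock": 100_000,       # one per item
}
ITEM_ROWS = 100_000  # the item table has a fixed size, independent of warehouses


def initial_rows(warehouses: int) -> int:
    """Approximate row count of a freshly loaded TPC-C dataset."""
    return warehouses * sum(ROWS_PER_WAREHOUSE.values()) + ITEM_ROWS


if __name__ == "__main__":
    print(f"{initial_rows(50_000):,} rows")  # 24,950,650,000 rows
```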

Unlike Amazon Aurora, CockroachDB achieves this performance in serializable mode, the strongest isolation mode in the SQL standard. Like many other databases, Aurora selectively degrades isolation levels for performance, leaving your business susceptible to fraud and data loss.

KV: Another Way to Benchmark Scale

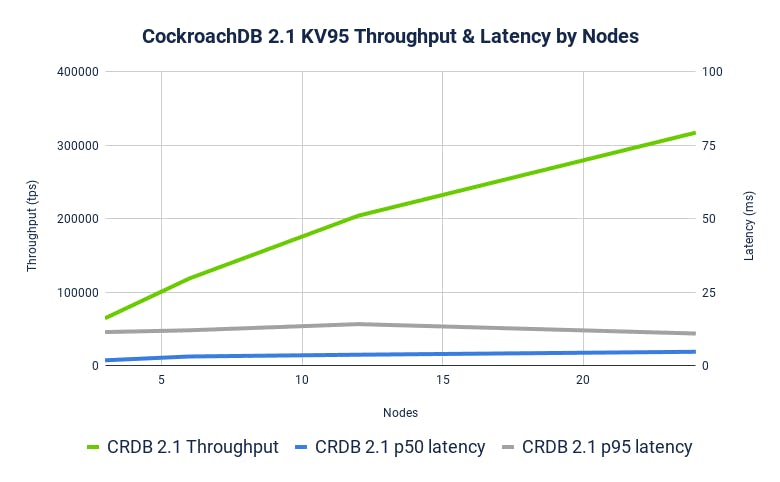

Another way to measure scale is to compare what happens to throughput and latency as we increase the number of nodes. We ran a simple benchmark named KV (95% point reads, 5% point writes, all uniformly distributed) on an increasing number of nodes to demonstrate that adding nodes increases throughput linearly while holding p50 and p99 latency constant.
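To make the KV mix concrete, here is a minimal sketch of how a client might pick each operation: a 95/5 split between point reads and point writes, with keys drawn uniformly from a fixed key space. This is a hypothetical illustration of the described workload, not the actual KV load generator; `next_op` and `READ_FRACTION` are made-up names.

```python
import random
from collections import Counter

READ_FRACTION = 0.95  # 95% point reads, 5% point writes


def next_op(rng: random.Random, key_space: int) -> tuple[str, int]:
    """Choose one operation: a point read or write on a uniformly random key."""
    key = rng.randrange(key_space)  # uniform over [0, key_space)
    op = "read" if rng.random() < READ_FRACTION else "write"
    return op, key


if __name__ == "__main__":
    rng = random.Random(42)
    ops = [next_op(rng, 1_000_000) for _ in range(100_000)]
    counts = Counter(op for op, _ in ops)
    print(counts["read"] / len(ops))  # close to 0.95
```

Because keys are uniform, load spreads evenly across ranges, which is what lets throughput grow with node count instead of concentrating on a few hot ranges.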

We used KV in addition to TPC-C because KV makes it easier to show how performance changes as nodes are added. TPC-C, by contrast, scales its load with the number of warehouses: each warehouse caps the achievable tpmC, so throughput grows by adding warehouses rather than nodes. Warehouse count and node count are related but distinct dimensions.

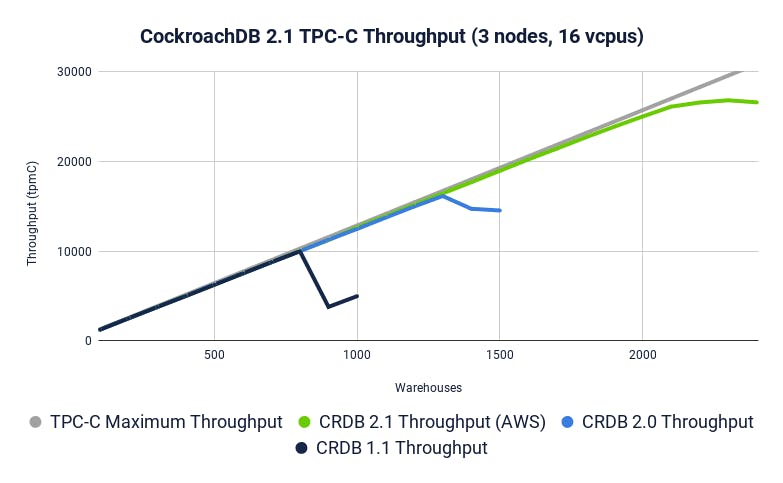

Improving Overall Efficiency: 3-Node Performance

While we have many customers pushing CockroachDB to scale (see Baidu), we recognize that many deployments don't require global scale. That is why we also push performance on 3-node clusters, so that we deliver value efficiently to all customers. Efficiency gains translate directly into cost savings, because fewer resources (e.g., nodes) are needed to support the same throughput. Any efficiency gains we make on a 3-node cluster benefit every workload, not just those of customers already operating at global scale.

We've improved 3-node performance by raising the maximum number of supported warehouses from 1,300 in 2.0 to 2,300 in 2.1, a 76.9% increase. CockroachDB 2.1 saves you money by providing more throughput with the same resources.

Cost: CockroachDB 2.1 costs only 1.8% of Amazon Aurora per tpmC

We've improved our cost metrics as well. Thanks to efficiency improvements, CockroachDB 2.1 needs only 18 nodes to run TPC-C 10K, down from 30 nodes in CockroachDB 2.0. That is 40% fewer nodes, which is equivalent to roughly 66% more throughput per dollar!

[Chart: CockroachDB 2.1 vs. Amazon Aurora, price per tpmC at TPC-C 10K*]

* Amazon Aurora has not attempted TPC-C 50K, so we limited this comparison to TPC-C 10K. For Aurora, we used their highest observed throughput, which was only 9,406 tpmC.

** Amazon Aurora did not publish their IOPS number, a key lever in their cost model, so we provide a range of costs based on 10K to 30K IOPS.

Another way to look at these cost savings: CockroachDB 2.1 can now run TPC-C 2,200 on a 3-node cluster (compared with only 1,300 in 2.0). On a 3-node cluster, price per tpmC falls from $3.08 to $1.85, a 40% reduction that corresponds to the same roughly 66% improvement in throughput per dollar.
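The two ways of quoting these savings (a 40% cut in cost versus about 66% more throughput per dollar) describe the same change from opposite directions. The sketch below computes both for the node counts and the 3-node price per tpmC quoted above; it assumes every node costs the same and that throughput is held fixed, so node count stands in for cost. The helper names are illustrative.

```python
def reduction(before: float, after: float) -> float:
    """Fractional decrease in cost when it falls from `before` to `after`."""
    return 1 - after / before


def gain(before: float, after: float) -> float:
    """Extra work per unit of cost when cost falls from `before` to `after`."""
    return before / after - 1


if __name__ == "__main__":
    # TPC-C 10K: 30 nodes in 2.0 vs. 18 nodes in 2.1 (assumes equal per-node cost).
    print(f"{reduction(30, 18):.0%} fewer nodes")        # 40% fewer nodes
    print(f"{gain(30, 18):.0%} more tpmC per node")      # 67% more tpmC per node
    # 3-node cluster: price per tpmC of $3.08 in 2.0 vs. $1.85 in 2.1.
    print(f"{reduction(3.08, 1.85):.0%} lower $/tpmC")    # 40% lower $/tpmC
    print(f"{gain(3.08, 1.85):.0%} more tpmC per $")      # 66% more tpmC per $
```

A 40% reduction in cost always corresponds to a 1 / 0.6, or about 1.67x, increase in throughput per dollar, which is why both figures appear in this section.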

Summary

CockroachDB can achieve massive scale while protecting your data from fraud and data loss.

In fact, CockroachDB 2.1 uses a stronger isolation level (serializable) to protect against fraud than Amazon Aurora does, and it still out-scales Aurora by 50x at less than 2% of the price per tpmC.

Click here to learn more about how a managed CockroachDB cluster offers a hassle-free way to achieve these benefits and more.

Note: We recently moved our CockroachDB performance testing from GCE to AWS. In a forthcoming blog post we will dive into the reasons behind this decision. Stay tuned!