Today, we are thrilled to announce the release of CockroachDB 1.1. We’ve spent the last five months incorporating feedback from our customers and community, and making improvements that will help even more teams move to CockroachDB.

We are also excited to share success stories from a few of our customers. Baidu, one of the world’s largest internet companies, shares how they are using CockroachDB to automate operations for applications that process 50M inserts and 2 TB of data daily. Heroic Labs, a software startup, shares how they simplified deployment of their gaming platform-as-a-service by packaging CockroachDB inside each server.

CockroachDB 1.1 focuses on three areas: seamless migration from legacy databases, simplified cluster management, and improved performance in real-world environments.

Quickly migrate your data… and code.

As we approached the 1.1 release, we wanted to understand the sticking points teams had when migrating from traditional RDBMS and NoSQL databases to CockroachDB. We identified issues around data transfer and application migration, and we’ve taken major steps to improve the experience for both.

First, let’s talk about your data. We launched CockroachDB with basic import functionality that mirrored what Postgres offered. This was fine for small data sizes, but as customers with terabytes and, in some cases, petabytes(!) of information looked to move to our database, we had to make data import much, much faster.

We decided to take the scale-out approach used by our distributed backup and restore functionality and extend it to CSV imports. This feature can reduce the time it takes to migrate large data sets to CockroachDB from hours to minutes.

This release also helps your team migrate both apps and expertise to CockroachDB by improving its SQL coverage and implementing PostgreSQL features that enable us to better support ORMs like Hibernate and ActiveRecord. We’ve also added an array type, which gives you more options for working with lists and can improve the performance of certain queries by keeping related data together.

Take control of your global clusters

One of the reasons teams gravitate towards CockroachDB is that it was built from the ground up to automate the heavy operational lifting like sharding, recovery, and rebalancing. However, operators still need visibility into what’s going on in their cluster and controls to prevent bad queries from degrading performance. With our 1.1 release, CockroachDB now gives operators real-time visibility and control of ongoing cluster activity. Let’s see how.

Jobs Table in the Admin UI

First, we are introducing the jobs table, your hub for seeing all of the long-running work happening in your cluster. This includes things like schema changes, CSV imports, and CockroachDB Self-hosted backups and restores. The jobs table lets you see what is happening, who triggered it, and the estimated time remaining. You can now examine how new jobs are impacting the performance of the cluster and use new commands to cancel, pause, and resume backups and restores to keep your cluster healthy.
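As a rough illustration of the job controls, the sketch below builds the SQL text for pausing, resuming, or cancelling a job by its ID. It assumes the `PAUSE JOB`, `RESUME JOB`, and `CANCEL JOB` statements take a numeric job ID; the helper name `job_control_statement` is hypothetical, and the exact syntax should be checked against the documentation for your version.

```python
# Hypothetical helper: builds a job-control statement as a string.
# The statement keywords are assumed from the CockroachDB SQL reference.
ALLOWED_ACTIONS = {"pause": "PAUSE", "resume": "RESUME", "cancel": "CANCEL"}


def job_control_statement(action, job_id):
    """Return e.g. 'PAUSE JOB 42' for action='pause' and job_id=42."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    # Accept only non-negative integers so nothing else reaches the SQL text.
    if isinstance(job_id, bool) or not isinstance(job_id, int) or job_id < 0:
        raise ValueError("job_id must be a non-negative integer")
    return f"{ALLOWED_ACTIONS[action]} JOB {job_id}"
```

The returned string can be passed to whichever SQL client or driver you already use; the job ID itself would come from the jobs table.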

SHOW QUERIES

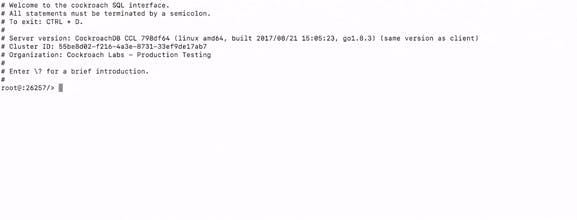

CockroachDB now provides greater visibility into running queries using the SHOW QUERIES command via the SQL CLI. Additionally, there are new SQL commands to cancel queries that match certain criteria in order to quickly check cluster capacity or prevent an errant query from doing too much damage.
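To make "cancel queries that match certain criteria" more concrete, here is a minimal sketch that takes rows shaped like `SHOW QUERIES` output and builds one `CANCEL QUERY` statement for each query that has been running longer than a threshold. The helper name, the dict-based row shape, the 30-second threshold, and the sample IDs are all illustrative assumptions; only the `query_id` and `start` columns are used, and their names should be confirmed against your version's output.

```python
from datetime import datetime, timedelta


def cancel_statements_for_slow_queries(rows, now, max_age=timedelta(seconds=30)):
    """Build CANCEL QUERY statements for queries older than max_age.

    rows: iterable of dicts with 'query_id' (str) and 'start' (datetime),
    assumed to mirror columns returned by SHOW QUERIES.
    """
    statements = []
    for row in rows:
        if now - row["start"] > max_age:
            # Double any single quotes so the ID stays a valid SQL string literal.
            query_id = row["query_id"].replace("'", "''")
            statements.append(f"CANCEL QUERY '{query_id}'")
    return statements


# Example with made-up rows (IDs and timestamps are illustrative only).
now = datetime(2017, 10, 12, 12, 0, 0)
rows = [
    {"query_id": "example-id-1", "start": now - timedelta(seconds=45)},
    {"query_id": "example-id-2", "start": now - timedelta(seconds=5)},
]
for statement in cancel_statements_for_slow_queries(rows, now):
    print(statement)  # prints: CANCEL QUERY 'example-id-1'
```

Filtering on the client side keeps the criteria (age, user, application) in ordinary code; the generated statements would then be executed one at a time through your SQL client.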

The combination of the jobs table and better query management provides the necessary tools to help you understand and manage your cluster. This is only the beginning; the suite of tools will grow with each subsequent CockroachDB release.

Improved performance for cloud environments

CockroachDB 1.1 has tightened latency and improved throughput across a variety of performance benchmarks. When tested against a high-concurrency key-value workload, we saw our average latencies drop below 5ms (a 13% improvement, with 95th-percentile latencies falling 11% to 17ms) and a modest throughput increase to 44k queries per second (a 14% improvement).

For enterprise users, we’ve made tremendous improvements to our distributed backup and restore capability, which can now restore a database 17x faster than it did in the 1.0 release.

Finally, we’ve laid the groundwork for a robust performance testing infrastructure, continued to focus on OLTP performance with an emphasis on TPC-C workloads, and tested clusters of up to 128 nodes. We are improving performance in every release, and will share more about this topic in the months ahead.

--

CockroachDB 1.1 is the next step in a long journey to transform the way the world works with data. This post covered updates around migration, monitoring, and performance, but this is a small sample of what we delivered in the 1.1 release. Check out the release notes to see the full list. If you want an early look into how 1.2 is shaping up, check out our public roadmap.

You can download the latest version of CockroachDB here. Let us know what you think! We’re looking forward to hearing your feedback and success stories.

Illustration by Rebekka Dunlap