Building a startup for global scale from day one isn’t easy – especially if you’re on the infrastructure team. But with forward-thinking approach, the team at City Storage Systems has built a back end that works across regions and clouds, at global scale, while significantly reducing manual labor and the opportunities for human error to throw a wrench into the works.

Rasmus Bach Krabbe (engineering manager) and Frederick Stenum Mogensen (software engineer) spoke at Cockroach Labs’s recent customer conference RoachFest about City Storage Systems’s journey using CockroachDB and Kubernetes for their OLTP workloads, and how they were able to reduce their own workload while building a powerful distributed application.

What is City Storage Systems?

City Storage Systems is a global startup in the real-estate and foodtech space. The company buys “dilapidated” – Krabbe’s words – real estate all over the world, refreshes it with state-of-the-art industrial kitchen equipment, and provides software and other services to facilitate restaurant entrepreneurs using these facilities to operate delivery-only food businesses.

But City Storage Systems isn’t just about these “ghost kitchens.” Some of their software is also available to any restaurant owner. For example, Otter, a SaaS restaurant operating system with solutions for everything from order management to analytics, is a City Storage Systems product.

According to Krabbe, City Storage Systems is using CockroachDB for “the bulk of our OLTP load. We have other databases like Postgres, but [CockroachDB] is the primary database for our most critical workloads.”

And although the internal infrastructure storage team Krabbe and Mogensen work on is small, it is responsible for what Krabbe describes as “a fleet” of CockroachDB clusters, many of them multi-region.

Getting started with CockroachDB and Kubernetes

From day one, City Storage Systems knew they needed a primary database that offered high availability and support for multi-region and multi-cloud deployments to support their global ambitions.

They also knew they needed a database that could speed up the development process by offering strongly consistent storage. Maintaining strong consistency across multiple regions or even multiple clouds isn’t easy, so a database that could take those problems off of developers’ plates was an important requirement.

They chose to build their application on Kubernetes for some of the same reasons: it facilitates high availability by automating application development and restarts, it’s portable and doesn’t lock them into a specific cloud vendor, and it can speed up development because many devs are already familiar with it, and because it can be run on laptops for fast testing and iteration.

CockroachDB is built to work on Kubernetes, and that compatibility also made the development process smoother than it might otherwise have been.

However, that doesn’t mean everything was easy! Their initial cluster provisioning process involved using a very simple Helm chart with a StatefulSet to deploy k8s and CockroachDB clusters across three regions. This system worked pretty well, but it also involved a lot of manual work. For each cluster, they had to manually initialize the cluster, create admin users, create the customer databases, create the customer users, grant permissions, etc.

The infrastructure storage team is relatively small, and they knew that this labor-intensive approach would become problematic in the long run. Additionally, any process that involves manual labor is also subject to human error. “We all know that when there’s a lot of manual steps, someone is going to forget one, or do a typo and then users will have the wrong name forever – stuff like this will happen,” says Mogensen.

The company also faced similar challenges in “day 2” operations of these clusters. For example, Mogensen says, they typically initialized clusters with very small disks to avoid spending money on storage they didn’t yet need. But as usage increased, the clusters needed to scale up, and Kubernetes StatefulSet disk size is an immutable field, so they couldn’t just change it. Instead, they deleted the StatefulSet with a flag to keep its associated pods running, and then redeployed a right-sized StatefulSet and rolled the old pods over to the new StatefulSet.

Again, this system worked, but it was labor-intensive and left the door open to potentially-costly human errors. If, for example, someone executing this process ever forgot the --cascade=orphan flag when deleting the initial StatefulSet, all of the associated pods (and with them, the entire CockroachDB cluster) would have been deleted too. They didn’t ever make this mistake in production, Mogensen says, but the possibility was certainly there.

Similar issues existed when scaling in or out horizontally. There were too many manual steps and processes for such a small team charged with managing a global-scale application.

Leveling up: building a custom Kubernetes operator

Many of these problems point in the same direction: using a Kubernetes operator to streamline and automate the process of deploying and managing new clusters. Using a Kubernetes operator would give City Storage Systems declarative management of resources such as databases and users, and a way to execute many of the manual processes from their initial setup as code, taking work (and the possibility of human error) off of the team’s plate.

There was an existing CockroachDB Kubernetes operator, but in 2021, it came with a number of limitations that were dealbreakers for City Storage Systems. For example, at the time it ran on GKE and hadn’t been tested on other cloud Kubernetes engines such as Amazon EKS. (Note: the operator does support EKS now).

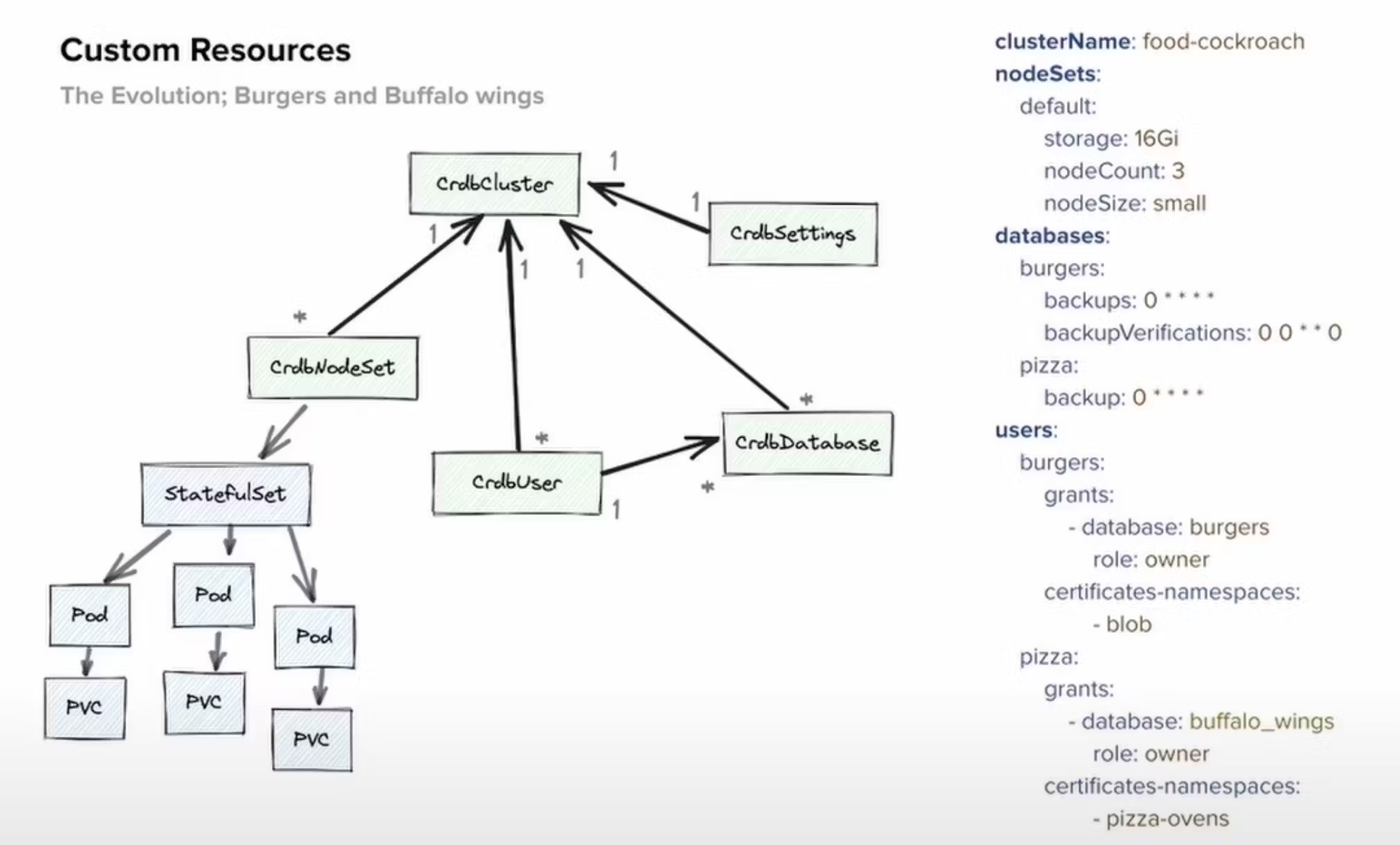

So, City Storage Systems decided to build their own custom operator. This proved to be a fairly quick process, according to Krabbe – they started in the second half of 2021, and the operator was in use in production before the end of that year. What they produced is depicted in the design diagram below (architecture on the left, a sample of what a yaml file defining resources and hierarchies on the right):

This approach freed up a lot of the team’s time by simplifying and automating a variety of things – check out the full video recording of their talk for more details. But let’s talk about one example in particular: migrations.

Migrating to a new cloud

People who work with databases usually have a healthy fear of migrations. Even when they’re “easy,” they’re never easy. But when the City Storage Systems team was tasked with moving to a different cloud, the migration “turned out to actually be a pretty smooth operation with the combination of CockroachDB and the operator we built,” Krabbe says.

On the database side of things, CockroachDB is built to be both cloud-native and cloud agnostic – it can be run on any cloud, across multiple clouds, on-prem, or in almost any kind of hybrid deployment. So moving to a different cloud didn’t mean having to move to a different database or having to change anything in their schema.

On the Kubernetes side, the operator that they had built was intentionally designed to abstract away a lot of the little cloud provider specifics. That meant that when it came time to migrate, Krabbe and his team didn’t have to do a lot of manual code refactoring to make things work on their new cloud.

Long story short: CockroachDB, in tandem with a custom Kubernetes operator, is delivering exactly what City Storage Systems needs: a highly-available, strongly consistent database with multi-region and multi-cloud support that remains performant at scale.

But that’s not the full story, In fact, it’s really just the tip of the iceberg. For more details, and to learn about the next phase of City Storage Systems’s work with CockroachDB, check out the full recording of their RoachFest ‘23 talk: