The 2021 Cloud Report stands on benchmarks. Now in its third year, our report is more precise than ever, capturing an evaluation of Amazon Web Services (AWS), Microsoft Azure (Azure), and Google Cloud Platform (GCP) that tells realistic and universal performance stories on behalf of mission-critical OLTP applications.

Our intention is to help our customers and any builder of OLTP applications understand the performance tradeoffs present within each cloud and within each cloud’s individual machines. Perhaps your current configuration isn’t the most cost-effective. Or you are looking to build a net-new application and want to see which provider has the lowest network latency. Maybe storage has been an issue in the past and you are looking for new solutions. Regardless of your motivation, the report is designed to help you achieve your goals and develop the best architecture for your specific needs.

The 2021 Cloud Report is developed by a team of dedicated engineers and industry experts at Cockroach Labs. It compares AWS, Azure, and GCP on micro and industry benchmarks that reflect critical OLTP applications and workloads.

This year, we assessed 54 machines and conducted nearly 1,000 benchmark runs to measure:

CPU Performance (CoreMark)

Network Performance (Netperf)

Storage I/O Performance (FIO)

OLTP Performance (Cockroach Labs Derivative of TPC-C)

--

Highlights

1. On all things throughput, GCP is king

GCP delivered the most throughput (i.e. the fastest processing rates) on 4/4 of the Cloud Report’s throughput benchmarks: network throughput, storage I/O read throughput, storage I/O write throughput, and maximum tpm throughput – a measure of throughput-per-minute (tpm) as defined by the Cockroach Labs Derivative of TPC-C.

For the first time in our three years of benchmarking the clouds, GCP delivered the most raw throughput (tpm) on the derivative TPC-C benchmark, a simulated measure of the transactional throughput of a retail or ecommerce company. This win is all the more notable after GCP’s third-place finish in 2020.

And for the third year in a row, GCP won the Network Throughput benchmark, delivering nearly triple the throughput of either AWS or Azure. Notably, GCP’s worst-performing machine for Network Throughput outpaced the best-performing machines from both AWS and Azure.

2. Competition for most powerful CPU heats up between Intel, AMD, and Amazon’s Graviton2

As we evaluated CPU performance across the machines, it became apparent that what’s inside each machine matters. We evaluated each cloud’s CPU performance using the CoreMark version 1.0 benchmark. CoreMark is open-source, cloud-agnostic, and tests against various real-world workloads like list sort and search. We reported the average number of iterations/second for both the single-core and the 16-core runs.
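To make the "average iterations/second" figure concrete, here is a minimal sketch of how repeated CoreMark results could be averaged per machine and core count. The machine name and every number below are made up for illustration; they are not results from the report, and this is not the reproduction tooling we used.

```python
from statistics import mean

# Hypothetical CoreMark scores (iterations/second) from repeated runs.
# Keys are (machine name, core count); all values are illustrative only.
runs = {
    ("machine-a", 1): [20000.0, 20400.0, 19900.0],
    ("machine-a", 16): [290000.0, 288000.0, 292000.0],
}


def average_iterations(results):
    """Return the mean iterations/second for each (machine, cores) key."""
    return {key: mean(values) for key, values in results.items()}


averages = average_iterations(runs)
for (machine, cores), avg in sorted(averages.items()):
    print(f"{machine} {cores:>2}-core: {avg:,.0f} iterations/sec")
```

Averaging several runs smooths out run-to-run noise, which is why a single CoreMark score is rarely quoted on its own.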

On the single-core runs, Intel swept the board, with 3/3 winning machines running Intel processors. But when we looked at performance on the 16-core benchmark, none of the winning machines ran Intel processors. In fact, the AWS custom-built Graviton2 Processor, which uses a 64-bit ARM architecture, edged out GCP and Azure’s winning machines, both of which ran AMD processors.

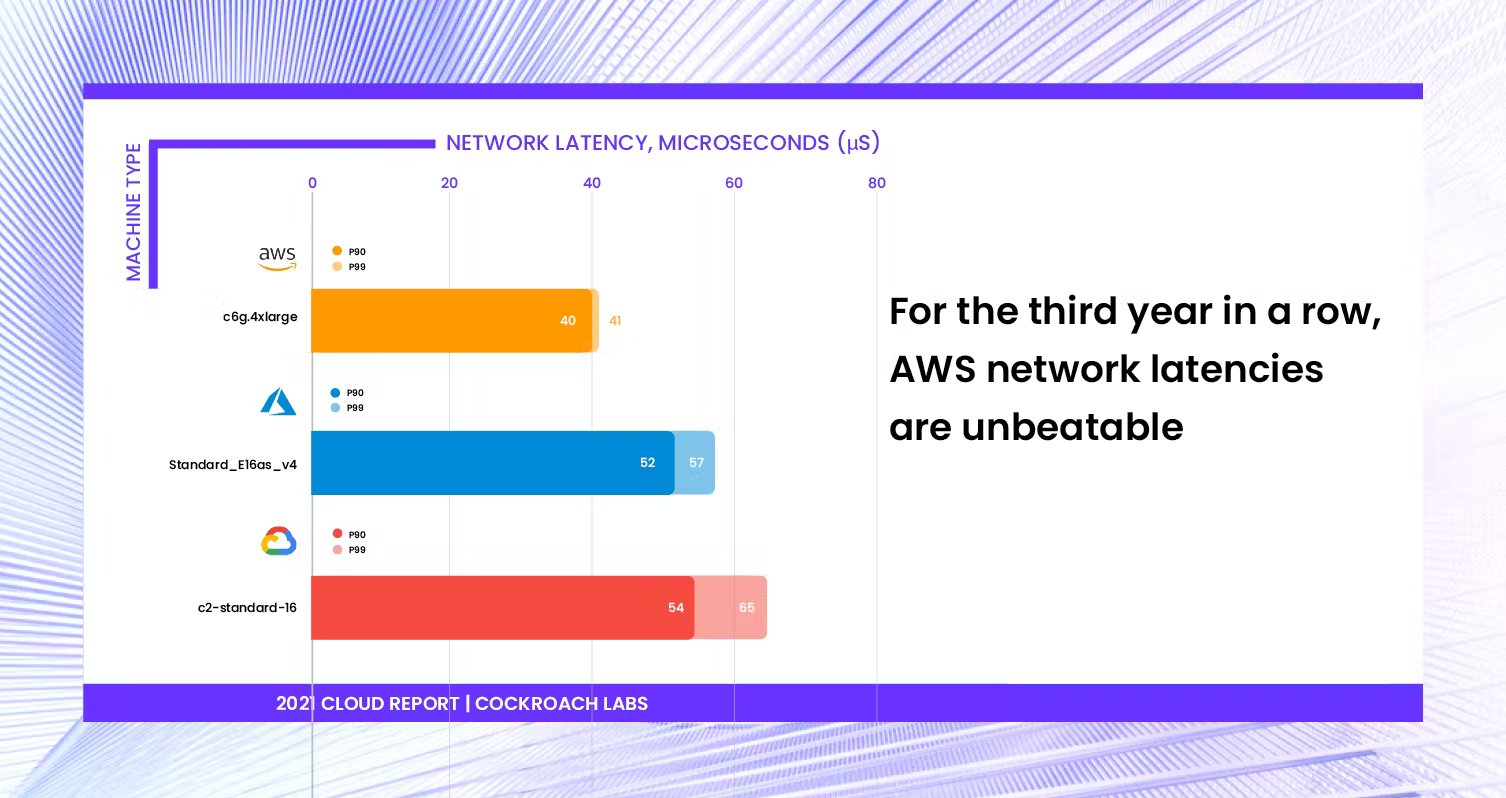

3. AWS network latencies are unbeatable

AWS has performed best in network latency for three years running. Its top-performing machine’s 99th percentile network latency was 28% lower than Azure’s and 37% lower than GCP’s. When it comes to placement policies, keep in mind that the physical distance between instances can vary randomly, with correlated effects on network latency.
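For readers less familiar with tail-latency comparisons, the sketch below shows one common way to compute a 99th percentile (the nearest-rank method) and to express one latency as "X% lower" than another. The nearest-rank choice and the sample values are assumptions for illustration; the report's own tooling may compute percentiles differently.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def percent_lower(candidate, baseline):
    """How much lower candidate is than baseline, as a percentage of baseline."""
    return (baseline - candidate) / baseline * 100


# Hypothetical round-trip latencies in microseconds (not report data).
cloud_a = [float(x) for x in range(1, 101)]   # 1.0 .. 100.0
cloud_b = [2 * x for x in cloud_a]            # 2.0 .. 200.0

p99_a = percentile(cloud_a, 99)
p99_b = percentile(cloud_b, 99)
print(f"p99 A={p99_a} us, p99 B={p99_b} us, A is {percent_lower(p99_a, p99_b):.0f}% lower")
```

The 99th percentile is used rather than the mean because a small fraction of slow round trips can dominate the experience of a latency-sensitive OLTP application.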

4. When more expensive “advanced” disks are worth the expense

Each of the clouds offers what we designated an “advanced disk” – a more expensive disk for applications and workloads that require higher performance: AWS’s io2, Azure’s ultra disk, and GCP’s extreme-pd.

Azure’s ultra disks are worth the money: Azure’s ultra disk was a strong competitor this year, in use in each of Azure’s first-place finishes. In many of our benchmarks, machines running ultra disk demonstrated performance improvements commensurate with or better than their estimated price increase.

AWS’s advanced io2 disks deliver low latency: Overall, AWS machines with the advanced io2 disks averaged 51% lower latency than the machines with general purpose disks, so if latency is of critical importance for your application or workload, we recommend evaluating io2 disks.

GCP’s general purpose disk matched performance of advanced disk offerings from AWS and Azure: GCP’s extreme-pd disk was unavailable to us at the time of testing, so it was notable how well GCP’s general purpose (pd-ssd) disk performed against Azure’s and AWS’s advanced disks. In fact, GCP’s top-performing machine (n2-standard-16 / pd-ssd) for storage I/O read and write achieved only 5% fewer read IOPS than Azure’s and AWS’s top-performing machines.

For overall OLTP performance, advanced disks may not be necessary: In our benchmark simulation of real-world OLTP workloads, cheaper machines with general purpose disks won for both AWS and GCP. The Cockroach Labs Derivative of TPC-C is a compute- and memory-intensive workload, and while it values storage I/O performance, we found the benchmark did not drive sufficient load at the storage I/O level to prove the value of io2 and ultra disks. As a result, memory- and compute-optimized machines thrived, and storage-optimized machines with advanced disks were underutilized.

To improve OLTP performance, we found it is better to spend on more nodes, memory, and better CPUs. Machines with advanced disks could be more appropriate for heavier read or write tasks, and critical workloads with demanding latency requirements.
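Whether an advanced disk is "worth the money" comes down to comparing the relative performance gain against the relative price increase. The following is a minimal sketch of that comparison; the function names and all figures are hypothetical and are not taken from the report's data or pricing.

```python
def percent_increase(new, old):
    """Relative increase of new over old, as a percentage of old."""
    return (new - old) / old * 100


def worth_the_premium(perf_general, perf_advanced, price_general, price_advanced):
    """Return (verdict, perf_gain, price_gain).

    verdict is True when the performance gain is at least as large
    as the price increase, both measured in percent.
    """
    perf_gain = percent_increase(perf_advanced, perf_general)
    price_gain = percent_increase(price_advanced, price_general)
    return perf_gain >= price_gain, perf_gain, price_gain


# Hypothetical example: throughput rises from 1000 to 1500 units
# while the monthly price rises from 100 to 140 units.
verdict, perf_gain, price_gain = worth_the_premium(1000, 1500, 100, 140)
print(f"performance +{perf_gain:.0f}%, price +{price_gain:.0f}%, worth it: {verdict}")
```

For a latency metric, where lower is better, the same comparison applies with the performance gain measured as the percentage reduction in latency instead.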

Methodology & Open Source Reproduction Steps

As in previous years, we have made everything required for reproduction (steps and resources, scripts, configurations, and instructions) available in an open source repository. Our goal is to ensure these resources will always be free and easy to access. We encourage you to review the specific steps used to generate the data in this report.

Note: if you wish to provision nodes exactly the same as we do, use this link to access the source code for Roachprod, our open source provisioning system.

What else is in the report?

The 2021 Cloud Report includes detailed results and analysis, an exhaustive list of individual machine performance, more details on OLTP performance, and the full results of the microbenchmarks for:

CPU Performance

Network Performance

Storage I/O Performance

OLTP Performance

Each year there is an overall “winner” of the report based on the cloud’s ranking for each of the respective benchmarks. Download the report to see who was declared this year’s winner, and to better understand how each cloud and its machines performed on each of the benchmarks.