Groww is India’s #1 stock broker with 16 million active users and $11 billion in market cap. Founded in 2017 by four ex-Flipkart employees – Lalit Keshre, Harsh Jain, Ishan Bansal and Neeraj Singh – Groww provides a simple, clutter-free and lightning-fast investment platform. What started as a mutual fund investing platform has today become a financial super app trusted by one out of four investors in India.

Groww serves high-concurrency, latency-sensitive traffic across critical user journeys. Market activity never pauses, and the database tier is expected to remain available at all times: during peaks, failures, and rapid growth.

As Groww evolved its platform architecture, the database layer needed to do more than “scale up.” It had to support operational isolation and elasticity while remaining strongly consistent. These capabilities are all essential for the user experience of Groww’s customers, who depend on it to buy and sell stocks, trade futures and options, and invest in mutual funds.

When CockroachDB became the foundation for that expansion, the next question was execution: How to migrate from a managed MySQL cloud instance to CockroachDB without a disruptive maintenance window – and with a clear rollback plan?

In this article, we walk through a production database migration step by step. We explain how CockroachDB’s in-house migration suite, MOLT (Migrate Off Legacy Technology), enabled a low-risk, near-zero-downtime transition from MySQL to CockroachDB through staged data movement, continuous replication, and built-in verification.

Migration Goals for a Production MySQL-to-Distributed-SQL Transition

Groww’s migration requirements were clear and non-negotiable:

Minimal application change: adopt CockroachDB with limited refactoring in the application layer.

Near-zero downtime: avoid dump-and-restore style cutovers that require extended application downtime.

Predictable rollback: retain the ability to revert to MySQL for a defined period after cutover.

Operational confidence: track replication progress and validate data correctness using explicit verification checkpoints.

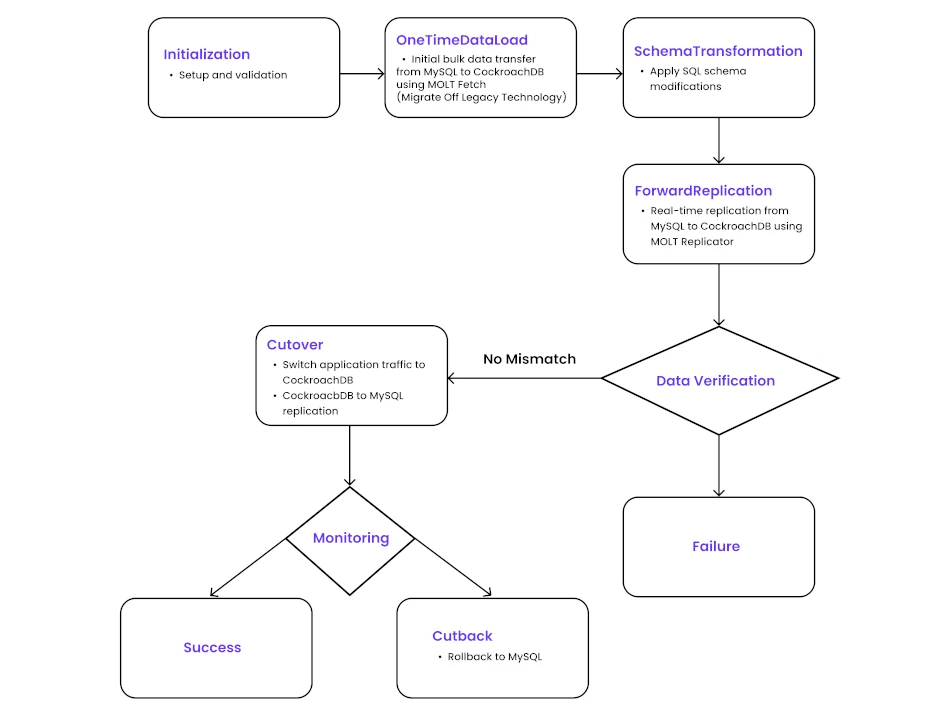

Staged MySQL-to-distributed-SQL migration strategy

The migration was approached as a staged transition rather than a single cutover event. Because the Groww application runs continuously and cannot tolerate extended downtime, MySQL and CockroachDB were kept active in parallel while the transition was carried out.

The first step was preparing the application for CockroachDB with minimal changes. This largely involved updating MySQL-specific SQL to PostgreSQL-compatible syntax and making a small number of schema and access-pattern adjustments to align with distributed SQL behavior.

Data movement was then handled in phases. An initial bulk load established the baseline dataset on CockroachDB, after which ongoing changes were continuously synchronized while MySQL continued to serve production writes. This allowed the target system to stay closely aligned with the source without interrupting live traffic.

Read traffic was subsequently routed to CockroachDB to validate query behavior and performance under real production load. Once both systems were fully in sync and data consistency was confirmed, application writes were switched to CockroachDB. After the cutover, changes continued to be propagated back to MySQL for a defined period, ensuring that traffic could be redirected back if issues were encountered during the early stabilization phase.
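The stages described above can be summarized as a small routing table. The following is a minimal sketch, not Groww's actual implementation: the phase names, the `Routing` type, and the `route` helper are hypothetical, and serve only to show which system handles reads and writes, and which direction changes flow, at each stage.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    """Hypothetical names for the stages described in the text."""
    BULK_LOAD = 1          # one-time load; MySQL serves all traffic
    REPLICATING = 2        # continuous sync from MySQL to CockroachDB
    READS_ON_TARGET = 3    # reads validated on CockroachDB, writes on MySQL
    WRITES_ON_TARGET = 4   # cutover done; changes flow back to MySQL


@dataclass(frozen=True)
class Routing:
    reads: str
    writes: str
    replication: str  # direction of change propagation


ROUTING = {
    Phase.BULK_LOAD: Routing("mysql", "mysql", "snapshot copy only"),
    Phase.REPLICATING: Routing("mysql", "mysql", "mysql -> cockroachdb"),
    Phase.READS_ON_TARGET: Routing("cockroachdb", "mysql", "mysql -> cockroachdb"),
    Phase.WRITES_ON_TARGET: Routing("cockroachdb", "cockroachdb", "cockroachdb -> mysql"),
}


def route(phase: Phase, is_write: bool) -> str:
    """Return which database should serve a request in the given phase."""
    routing = ROUTING[phase]
    return routing.writes if is_write else routing.reads


if __name__ == "__main__":
    for phase in Phase:
        r = ROUTING[phase]
        print(f"{phase.name:17} reads={r.reads:12} writes={r.writes:12} sync={r.replication}")
```

Only one system accepts writes in any phase, which is what keeps the rollback path simple: falling back means moving to the previous row of the table.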

Minimal application changes for distributed SQL compatibility

The first step was to make the application compatible with CockroachDB’s PostgreSQL-compatible SQL layer. This required only a small set of focused changes, allowing the existing application logic to remain largely unchanged while adhering to distributed database best practices. The main areas addressed were:

Primary key design: sequential primary keys were replaced to avoid write concentration and contention in a distributed environment.

Indexing patterns: indexes based on monotonically increasing values were reviewed and adjusted to prevent hotspots under sustained write load.

SQL compatibility: MySQL-specific SQL syntax and behaviors were updated to PostgreSQL-style equivalents where required.
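To illustrate why sequential keys concentrate writes, here is a simplified simulation. It assumes a keyspace split evenly into eight ranges, which is not how CockroachDB actually splits data (ranges split by size and load), and the constants and function names are hypothetical. The effect it shows is the same, though: sequential keys all land in one range, while random 128-bit keys (as with UUIDs) spread across all of them.

```python
import random

NUM_RANGES = 8          # assumed number of ranges, for illustration only
KEY_SPACE = 2 ** 128    # size of a UUID-like keyspace


def range_for(key: int) -> int:
    """Map a key to one of NUM_RANGES evenly sized slices of the keyspace."""
    return key * NUM_RANGES // KEY_SPACE


def busiest_share(keys) -> float:
    """Fraction of all writes that land on the single busiest range."""
    counts = [0] * NUM_RANGES
    for key in keys:
        counts[range_for(key)] += 1
    return max(counts) / len(keys)


rng = random.Random(42)  # seeded so the run is repeatable
sequential_keys = list(range(1, 1001))                     # auto-increment style
random_keys = [rng.getrandbits(128) for _ in range(1000)]  # UUID style

if __name__ == "__main__":
    print(f"sequential keys: busiest range takes {busiest_share(sequential_keys):.0%} of writes")
    print(f"random keys:     busiest range takes {busiest_share(random_keys):.0%} of writes")
```

The same reasoning applies to secondary indexes on monotonically increasing values such as timestamps: every new entry targets the same end of the index.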

Executing the staged transition with MOLT

With the overall strategy in place, the migration was carried out using CockroachDB’s MOLT tooling. MOLT provided a structured way to handle a one-time data move, keep data synchronized while production traffic continued, and verify consistency at key points in the transition.

Loading the existing dataset

The migration began with a one-time data load from MySQL into CockroachDB using MOLT Fetch. This moved the bulk of the data upfront while MySQL continued serving production traffic and established the starting point for synchronization.

    molt fetch \
      --source "mysql://<user>:<password>@<mysql-host>:3306/<database>" \
      --target "postgresql://<user>:<password>@<crdb-host>:26257/<database>" \
      <options>

As part of this step, Fetch records the MySQL GTID (Global Transaction Identifier) position corresponding to the snapshot. Since the one-time load can run for an extended duration, MySQL GTID retention was temporarily increased to ensure this GTID remained available when replication was started.
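The retention requirement comes down to simple arithmetic: the recorded GTID must still be available when the load finishes, so retention has to exceed the load duration with some margin. The sketch below is a back-of-the-envelope estimate only. The function name, the throughput figure, and the safety factor are hypothetical; only the roughly 2 TB dataset size comes from this migration.

```python
import math


def required_retention_hours(dataset_gb: float,
                             throughput_mb_per_s: float,
                             safety_factor: float = 2.0) -> int:
    """Estimate how long change history must be retained on the source.

    dataset_gb          -- size of the data to bulk load
    throughput_mb_per_s -- assumed sustained load throughput (hypothetical)
    safety_factor       -- multiplier covering retries and slow phases
    """
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput must be positive")
    load_seconds = dataset_gb * 1024 / throughput_mb_per_s
    return math.ceil(load_seconds / 3600 * safety_factor)


if __name__ == "__main__":
    # ~2 TB dataset, assumed 50 MB/s sustained throughput, 2x safety margin
    print(required_retention_hours(2000, 50))  # -> 23
```

In practice the throughput should be measured with a trial load of a representative table rather than assumed.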

Keeping data synchronized during the transition

After the one-time load completed, MOLT Replicator was used to continuously apply MySQL changes into CockroachDB. MySQL remained the write source, while CockroachDB stayed up to date through replication.

Replication was started from the GTID captured during the Fetch phase:

    replicator mylogical \
      --sourceConn "mysql://<user>:<password>@<mysql-host>:3306/<database>" \
      --targetConn "postgresql://<user>:<password>@<crdb-host>:26257/<database>" \
      --defaultGTIDSet "<gtid-from-molt-fetch>" \
      <options>

Replication progress was monitored to determine when both systems were fully aligned.
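One way to reason about "fully aligned" is to compare the GTID set executed on MySQL with the set already applied to the target. The sketch below parses MySQL's untagged GTID set syntax (`server_uuid:start-end[:start-end]`) and counts the transactions still pending. The function names are hypothetical, and obtaining the applied set from the replication side is an assumption for illustration rather than documented Replicator behavior.

```python
def parse_gtid_set(text: str) -> dict:
    """Parse 'uuid:1-5:8,uuid2:1-3' into {uuid: [(start, end), ...]}."""
    gtids = {}
    for entry in text.replace("\n", "").split(","):
        entry = entry.strip()
        if not entry:
            continue
        server_uuid, *intervals = entry.split(":")
        spans = gtids.setdefault(server_uuid.lower(), [])
        for interval in intervals:
            start, _, end = interval.partition("-")
            # A single transaction number such as '8' means the span 8-8.
            spans.append((int(start), int(end) if end else int(start)))
    return gtids


def pending_transactions(source_set: str, applied_set: str) -> int:
    """Count transactions present in source_set but not in applied_set.

    Assumes the intervals within each set do not overlap one another,
    which holds for the normalized sets MySQL reports.
    """
    source = parse_gtid_set(source_set)
    applied = parse_gtid_set(applied_set)
    pending = 0
    for server_uuid, spans in source.items():
        done = applied.get(server_uuid, [])
        for start, end in spans:
            covered = sum(
                max(0, min(end, d_end) - max(start, d_start) + 1)
                for d_start, d_end in done
            )
            pending += (end - start + 1) - covered
    return pending


if __name__ == "__main__":
    uuid = "3e11fa47-71ca-11e1-9e33-c80aa9429562"  # example server UUID
    print(pending_transactions(f"{uuid}:1-100", f"{uuid}:1-90"))  # -> 10
```

A result of zero, sustained while writes are drained, is the condition the cutover waits for.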

Validating reads before switching writes

With replication in place, application read traffic was routed to CockroachDB first. This allowed query behavior and performance to be observed under real production load while writes continued on MySQL. Any remaining adjustments could be made before introducing CockroachDB into the write path.

Cutover, verification, and rollback window

Once replication was fully caught up and read traffic was stable, writes were switched to CockroachDB after a short drain window. Around this transition, MOLT Verify was used to compare data between MySQL and CockroachDB and confirm consistency at key checkpoints.

    molt verify \
      --source "mysql://<user>:<password>@<mysql-host>:3306/<database>" \
      --target "postgresql://<user>:<password>@<crdb-host>:26257/<database>" \
      <options>

After cutover, changes were also propagated back to MySQL using MOLT Replicator for a defined period. This ensured that if Groww encountered issues after switching writes, traffic could be redirected back to MySQL without losing changes made during that window.
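Conceptually, the consistency check run around cutover compares rows on both sides by primary key. The following sketch shows the idea with in-memory dictionaries. It does not represent how MOLT Verify is implemented, and the function names and report categories are hypothetical.

```python
import hashlib


def row_digest(row: dict) -> str:
    """Hash a row's columns in a stable order so equal rows hash equally."""
    canonical = "|".join(f"{col}={row[col]!r}" for col in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_tables(source_rows: dict, target_rows: dict) -> dict:
    """Compare two {primary_key: row} mappings and classify differences."""
    report = {"missing": [], "mismatched": [], "extraneous": []}
    for pk, row in source_rows.items():
        if pk not in target_rows:
            report["missing"].append(pk)        # on source, not on target
        elif row_digest(row) != row_digest(target_rows[pk]):
            report["mismatched"].append(pk)     # same key, different values
    report["extraneous"] = [pk for pk in target_rows if pk not in source_rows]
    return report


if __name__ == "__main__":
    source = {1: {"symbol": "ABC", "qty": 10}, 2: {"symbol": "XYZ", "qty": 5}}
    target = {1: {"symbol": "ABC", "qty": 10}, 2: {"symbol": "XYZ", "qty": 7},
              3: {"symbol": "DEF", "qty": 1}}
    print(compare_tables(source, target))
```

An empty report at a checkpoint is the signal that the two systems agree. For tables in the hundreds of gigabytes, a real tool would compare in batches rather than load everything into memory.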

Production performance after cutover

The following metrics reflect observations from one of the actual production workloads. Read latencies remained largely comparable between MySQL and CockroachDB, while write latencies showed a modest increase, which is expected in a distributed SQL database where commits require consensus across replicas before being acknowledged.
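The write-latency effect can be illustrated with a simplified model: a commit is acknowledged once a majority of replicas have persisted it, so its latency tracks the replica that completes the quorum rather than the fastest one. The sketch below uses hypothetical latency figures and ignores details such as leader placement and pipelining. It is not a model of measured Groww numbers.

```python
def quorum_commit_latency(ack_latencies_ms: list) -> float:
    """Latency at which a majority of replicas have acknowledged a write.

    ack_latencies_ms holds one acknowledgment time per replica, including
    the replica that coordinates the write.
    """
    if not ack_latencies_ms:
        raise ValueError("at least one replica is required")
    quorum = len(ack_latencies_ms) // 2 + 1
    return sorted(ack_latencies_ms)[quorum - 1]


if __name__ == "__main__":
    # Hypothetical acknowledgment times for three replicas, in milliseconds.
    print(quorum_commit_latency([1.0, 4.0, 9.0]))  # -> 4.0
    # A single-node database only waits for its own local write.
    print(quorum_commit_latency([1.0]))            # -> 1.0
```

Note that the slowest replica does not affect the result, which is why a single slow or failed node does not stall writes.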

Response time comparison

The migration was executed against a large production dataset totaling ~2 TB, with the three largest tables accounting for the majority of the volume. These included tables of approximately 120 GB, 750 GB, and 930 GB, representing some of the most active and critical data in the system.

Architectural lessons from a staged production migration

This database migration showed that moving a live MySQL workload to CockroachDB does not require a disruptive cutover or major application changes.

By preparing the application upfront and separating data movement from traffic changes, MySQL and CockroachDB were able to run in parallel for most of the transition. A one-time data load followed by continuous replication kept the systems aligned, while routing reads first provided early validation under real production traffic. MOLT made it possible to execute this approach in a predictable way, with clear visibility into replication progress and data consistency, and the final cutover was limited to a short, controlled window.

Beyond the technical advantages of this approach, Groww also realized key business benefits from CockroachDB’s efficient migration path. The staged, near-zero-downtime migration meant Groww avoided the revenue and reputational risk of a prolonged maintenance window in a 24/7 market. By validating a ~2 TB production workload under live traffic before switching writes, Groww protected the customer experience during high-stakes trading activity and ensured continuity for millions of latency-sensitive transactions. The defined rollback window also reduced operational risk, giving leadership confidence to move quickly without betting the business on a single cutover event.

Ultimately, CockroachDB’s ease of migration and built-in tooling accelerated Groww’s path to ROI by minimizing disruption, preserving user trust, and enabling a more resilient, always-on platform that can scale with market growth.


Sourav De is an experienced software engineering leader with nearly 12 years in the technology industry, currently serving as a Software Architect at Groww, where he designs and implements scalable backend systems and drives architectural excellence across key product domains. Over his career, he has built robust, high-traffic platforms across multiple senior engineering roles, combining deep expertise in backend development, distributed systems, and cloud technologies with a practical, systems-first approach to solving complex product challenges and mentoring teams, while delivering resilient, maintainable solutions that support rapid growth and user scalability.

Vittal Pai is a technology leader and enterprise solutions expert with deep experience in distributed systems, databases, and cloud-native architectures. He currently serves as a Staff Enterprise Solutions Architect at Cockroach Labs, where he works with enterprises to design resilient, scalable data platforms for mission-critical applications. Earlier in his career, he was an R&D Engineer at IBM, contributing to advanced technology development and earning two patents. Vittal brings a strong blend of research-driven engineering expertise and strategic customer engagement, helping organizations adopt modern, future-ready architectures.

Paresh Saraf is a seasoned technology leader with over 15 years of experience in distributed systems, data platforms, and cloud technologies. He currently serves as Senior Manager, APAC Customer Engineering at Cockroach Labs, where he partners with enterprises to drive adoption of cloud-native, distributed SQL architectures. Throughout his career, Paresh has held diverse technical and leadership roles across global technology organizations, combining deep expertise in databases, cloud platforms, and enterprise transformation with strategic insight to help businesses design and implement resilient, high-performance, future-ready technology solutions.