It’s not easy for a startup to take on giants like the major cloud storage providers.

Yet, Storj is doing it. The company has built a globally distributed enterprise-ready object storage platform that’s S3 compatible – no small feat in and of itself. But perhaps more importantly, it actually outperforms S3 in several areas, achieving a goal that CTO Jacob Willoughby, who spoke at CockroachDB’s RoachFest 2023 conference, once called “impossible.”

What is Storj?

The quick pitch for Storj is that it’s like AirBnB for data storage. “Most of the storage around the world is under-utilized,” Willioughby says. There are millions and millions of internet-connected storage drives all over the world, and most of them are less than half full. Storj uses that excess capacity and turns it into a cohesive storage layer, which in turn enables it to offer global cloud storage for 80-90% less cost than the big players, with a much smaller carbon footprint due to eliminating the need to build, maintain and cool new data centers to meet surging demand.

Storj encrypts the data and breaks files into small pieces before distributing them, ensuring security and also allowing for CDN-like global performance. And because only a fraction of the pieces are required to retrieve a file, Storj can deliver enterprise-grade availability and durability without the need to replicate and pay to store multiple copies..

Watch Storj’s full RoachFest 2023 presentation to get all the details

What Storj needed in a metadata database

Orchestrating a distributed data storage system this complex at scale requires a heavy reliance on metadata. Going into building the application, Storj knew that this would require a database that could offer:

Strong consistency

High performance

Horizontal scalability

Ultimately, Willoughby says, their decision also hinged on a few additional factors:

Postgres wire compatibility – the engineering team had already started building with Postgres, and they wanted to be able to move to their final database solution relatively smoothly.

Open-source – because Storj itself is open-source, partnering with a database company that shared the same ethos made sense (and Storj also wanted the ability to run their database on-prem).

Established – for such a critical part of their infrastructure, Storj wanted to choose a product that was proven.

The Storj team considered a wide variety of databases, including traditional Postgres, sharded Postgres (Citus), NoSQL databases, Spanner, Yugabyte, TiDB, and CockroachDB. Of those, the only one that ticked all of their boxes was CockroachDB.

The results: Storj with CockroachDB by the numbers

Storj has been a CockroachDB user for years, and the results speak for themselves. The company is now deploying CockroachDB across nine separate cloud regions – three in the US, three in Europe, and three in Asia. As of this fall, one of its larger CockroachDB clusters contained over five billion rows, and was handling an average of 5k read ops and 1.2k write ops per second, “with bursts, of course, much higher than that,” Willoughby says.

It is a testament to both the quality of Storj’s engineering team and the features of CockroachDB that Storj has realized massive growth in the years since adopting CockroachDB without experiencing a major “success disaster”. “We’ve delivered on the promise of horizontal scalability,” Willoughby says. “It’s really nice when you have those 10x events that just kind of sneak up on you as your company grows, and you don’t notice because all you did is [go] into your managed CockroachDB cluster and click scale up, and then you were fine.”

Willoughby also noted at RoachFest: “by the end of [2023], we’ll have had another 2x or 3x load increase.” That kind of load increase isn’t a big deal for Storj’s operations team, he says, in part because their choice of CockroachDB’s managed service allows Storj to “focus on building and delivering our product, rather than managing and scaling a database.”

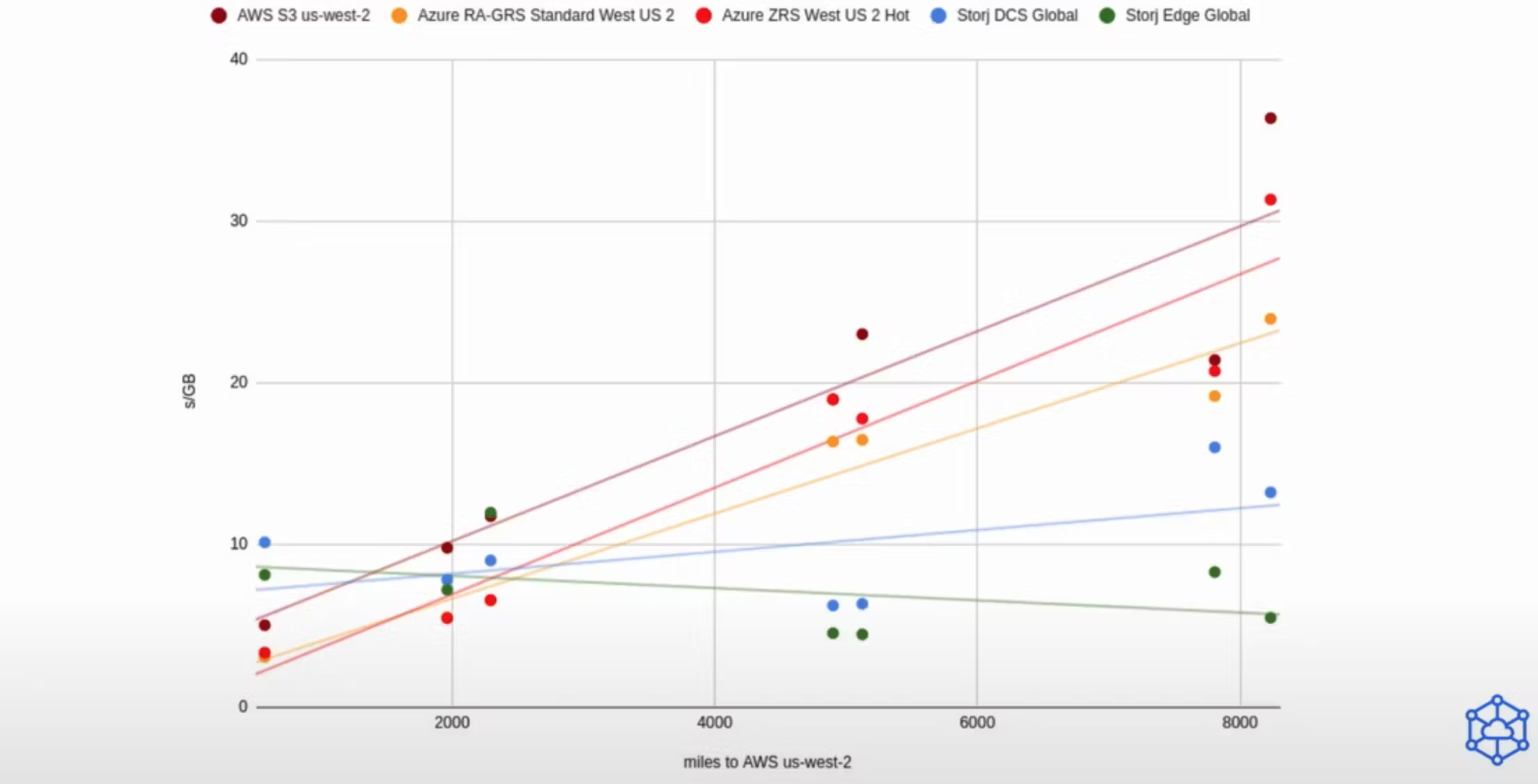

The end result? Willoughby shared a slide comparing performance downloading a 1GB file from AWS, Azure, and Storj. It shows that while AWS and Azure performed faster when downloading from location close to the cloud region where the data is located, as the distance increases the performance of the traditional cloud providers degrades, whereas Storj does not:

How did they make that happen?

Learn more about how Storj works and how CockroachDB has helped them “do the impossible,” in the words of Jacob Willoughby: